
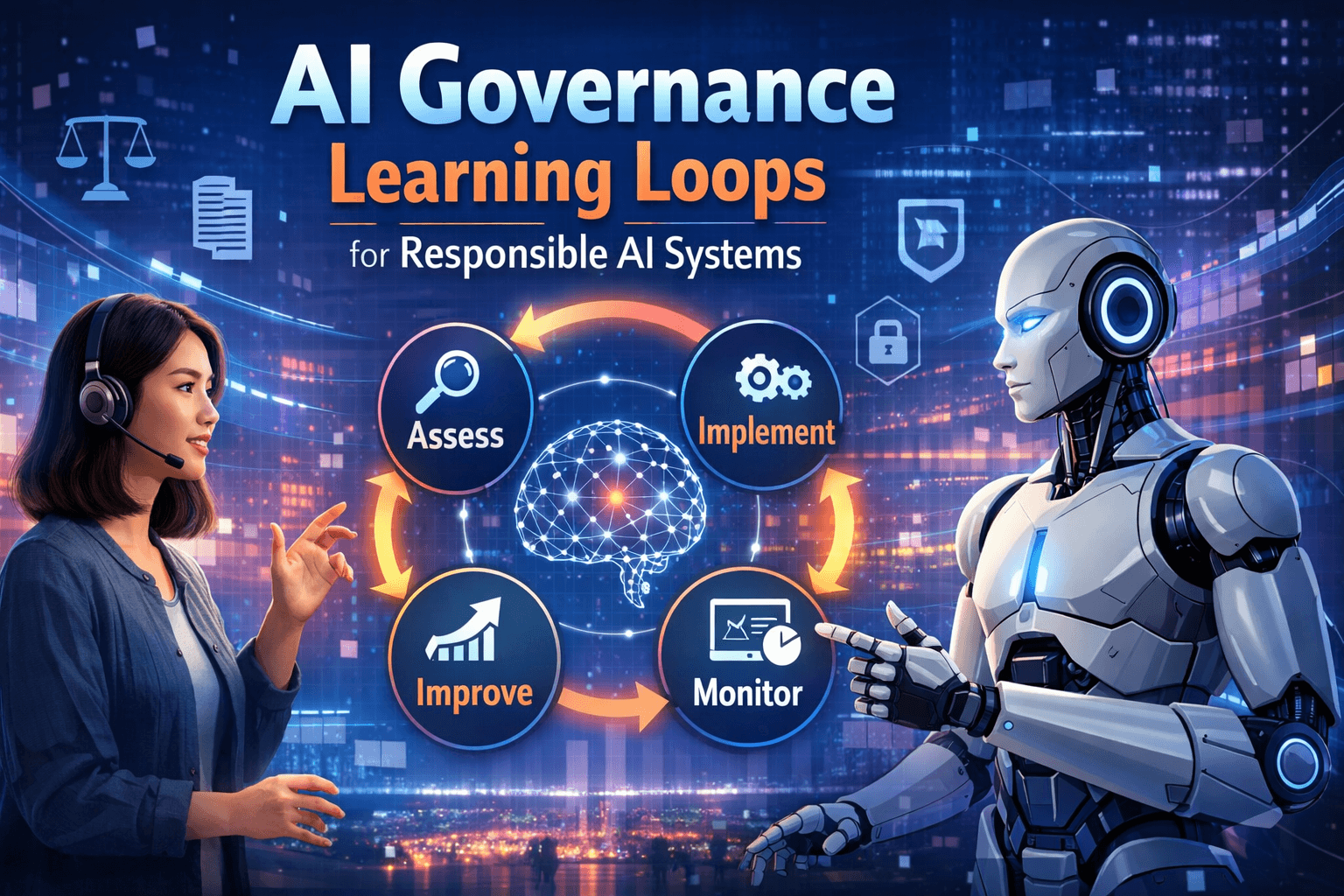

Establishing a resilient AI governance business context learning loop is a primary requirement for enterprises deploying autonomous systems at scale. This recursive framework keeps oversight policies from going static: they improve continuously as model performance and organizational goals shift. By integrating technical feedback into AI model lifecycle governance, B2B leaders can identify risks before they affect the bottom line. The approach supports long-term stability by connecting computational outputs to strategic business requirements. Ultimately, these loops create a structured environment in which ethical AI governance and high-speed innovation complement rather than oppose each other within production-grade enterprise infrastructure.

Key Takeaways

Recursive Feedback: Use real-time operational data to update governance policies dynamically.

Lifecycle Integration: Embed oversight mechanisms into every phase of AI model lifecycle governance.

Risk Mitigation: Identify and neutralize ethical biases through automated, high-frequency learning cycles.

Strategic Alignment: Ensure that technical model performance consistently reflects the organization's specific truth.

What This Means in 2026: Why Adaptive Loops Matter

In 2026, periodic manual audits are giving way to the AI governance business context learning loop. In an era of agentic systems, governance must be as dynamic as the AI it oversees to prevent "agentic drift." This shift requires a deep understanding of AI governance maturity models to move from reactive compliance to proactive optimization.

Implementing AI governance best practices now demands that every automated decision be cross-referenced against an evolving institutional context. Without this recursive oversight, organizations face significant liability as models deviate from their intended purpose over time. Understanding why AI governance matters in this new landscape is no longer an academic exercise but a core driver of institutional resilience.
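One way to make "deviation from intended purpose" measurable is to compare the mix of recent automated decisions against a baseline window. The sketch below uses the Population Stability Index for that comparison. It is an illustration under stated assumptions, not a method described in this post: the function names, the categorical decision labels, and the 0.2 review threshold (a common rule of thumb) are all hypothetical choices.

```python
import math
from collections import Counter

def population_stability_index(baseline, current, eps=1e-6):
    """PSI between two lists of categorical decisions (e.g. 'approve'/'deny')."""
    categories = set(baseline) | set(current)
    base_counts, cur_counts = Counter(baseline), Counter(current)
    psi = 0.0
    for cat in categories:
        # eps avoids log(0) when a category is missing from one window
        p = max(base_counts[cat] / len(baseline), eps)
        q = max(cur_counts[cat] / len(current), eps)
        psi += (q - p) * math.log(q / p)
    return psi

def needs_policy_review(baseline, current, threshold=0.2):
    """Flag the governance policy for review when the decision mix has shifted.

    The 0.2 threshold is an assumed rule of thumb, not a value from this post.
    """
    return population_stability_index(baseline, current) > threshold

# Hypothetical windows of decisions logged by an autonomous agent
baseline_window = ["approve"] * 70 + ["deny"] * 30
recent_window = ["approve"] * 30 + ["deny"] * 70
print(needs_policy_review(baseline_window, recent_window))
```

In a real loop the flagged result would open a policy review rather than change policy automatically, which keeps a human decision between detection and action.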

Core Comparison: Learning Loops vs. Traditional Oversight

| Feature / Solution | | | Static IT Audits | Ad-hoc Monitoring |
| --- | --- | --- | --- | --- |
| Primary Focus | Automated Decision Oversight | Technical Truth Engineering | Legacy Compliance | Reactive Fixes |
| Intelligence Level | High-Fidelity Recursive | Strategic Scaling | Low/Manual | Moderate |
| Data Alignment | Contextual Organizational Truth | End-to-End Governance | Generic Datasets | Fragmented |
| Audit Frequency | Real-Time Continuous | Continuous Improvement | Annual/Quarterly | Periodic |
| Risk Management | Active Guardrails | AI Governance Solutions | Theoretical | Variable |

Samta.ai provides the ML engineering depth and architectural foresight required to transform a static AI governance risk management plan into a high-performance decision engine.

Practical Use Cases

1. Dynamic Credit Underwriting

Financial institutions use a learning loop to keep credit models fair as economic conditions shift. By applying an AI risk management model, banks can adjust their approval thresholds in real time to maintain both profitability and compliance.
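As a rough illustration of threshold adjustment under two constraints, the sketch below picks the lowest score cutoff that keeps the observed default rate and the gap in approval rates between segments within limits. Everything here is an assumption made for the example: the `Application` fields, the 5% default limit, and the 0.8 approval-rate ratio are hypothetical and would need to come from an institution's own risk and compliance policy.

```python
from dataclasses import dataclass

@dataclass
class Application:
    score: float     # model score; higher means lower risk (assumption)
    group: str       # hypothetical segment label used for the fairness check
    defaulted: bool  # observed repayment outcome

def approval_rate(apps, cutoff, group):
    """Share of a segment's applications at or above the cutoff."""
    members = [a for a in apps if a.group == group]
    if not members:
        return 0.0
    return sum(a.score >= cutoff for a in members) / len(members)

def default_rate(apps, cutoff):
    """Share of approved applications that later defaulted."""
    approved = [a for a in apps if a.score >= cutoff]
    if not approved:
        return 0.0
    return sum(a.defaulted for a in approved) / len(approved)

def choose_cutoff(apps, candidates, max_default_rate=0.05, min_rate_ratio=0.8):
    """Return the lowest candidate cutoff meeting both limits, or None."""
    groups = sorted({a.group for a in apps})
    for cutoff in sorted(candidates):
        rates = [approval_rate(apps, cutoff, g) for g in groups]
        ratio = min(rates) / max(rates) if max(rates) > 0 else 0.0
        within_risk = default_rate(apps, cutoff) <= max_default_rate
        if within_risk and ratio >= min_rate_ratio:
            return cutoff
    return None  # no candidate satisfies both limits; escalate to a human
```

Re-running `choose_cutoff` on each new window of outcomes is what turns a fixed threshold into a loop. Returning `None` instead of a fallback value is deliberate, so that an unsatisfiable policy surfaces as an escalation rather than a silent default.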

2. Clinical Diagnostic Oversight

Healthcare providers implement a refined AI governance framework for diagnostics so that recommendations match the latest research. The loop flags outdated logic, helping prevent clinical errors and legal liability.

3. Supply Chain Orchestration

Global logistics firms use a learning loop to audit autonomous agents. This marks a clear distinction between AI governance and traditional methods: adjustments happen in real time without human bottlenecks.

4. E-commerce Recommendation Safety

Retailers use these loops to prevent recommendation systems from developing harmful feedback cycles. This maintains a positive brand reputation and adheres to evolving consumer safety regulations globally.

5. Legal Tech Document Intelligence

Law firms deploy governance loops to verify the accuracy of AI-drafted contracts. The system learns which clauses are consistently flagged by partners, refining the tool's ethical AI governance guardrails.
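The "learns which clauses are consistently flagged" step can be sketched as a simple tally that promotes a clause type to a guardrail once reviewers flag it often enough. This is a minimal illustration, not a description of any real product: the class name, the clause labels, and both thresholds are hypothetical.

```python
from collections import defaultdict

class ClauseFeedbackLoop:
    """Tracks partner reviews and surfaces clause types that need a guardrail."""

    def __init__(self, min_reviews=5, flag_rate_threshold=0.6):
        self.min_reviews = min_reviews                  # assumed minimum sample size
        self.flag_rate_threshold = flag_rate_threshold  # assumed promotion threshold
        # clause_type -> [times flagged, total reviews]
        self._reviews = defaultdict(lambda: [0, 0])

    def record_review(self, clause_type, flagged):
        """Store one partner review of an AI-drafted clause."""
        stats = self._reviews[clause_type]
        stats[0] += int(flagged)
        stats[1] += 1

    def guardrail_clauses(self):
        """Clause types flagged often enough to require human review by default."""
        return sorted(
            clause
            for clause, (flagged, total) in self._reviews.items()
            if total >= self.min_reviews
            and flagged / total >= self.flag_rate_threshold
        )
```

The minimum review count keeps one or two early flags from triggering a guardrail, which is one small defense against the over-governance risk of rules accumulating faster than evidence.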

Limitations & Risks

Feedback Latency: If the data used to update the loop is delayed, governance policies will lag behind actual agent behavior.

Over-Governance: Excessively rigid learning loops can stifle the very innovation they were designed to protect, leading to friction.

Data Integrity: If the information entering the AI governance business context learning loop is compromised, the resulting policies become flawed.
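Two of the risks above, feedback latency and data integrity, can be partly addressed by gating every feedback batch before it is allowed to influence policy. The following is a sketch under assumptions: the function names, the SHA-256 digest supplied by the producer, and the 15-minute freshness window are all hypothetical and would depend on how a given pipeline transports its feedback.

```python
import hashlib
from datetime import datetime, timedelta, timezone

def payload_digest(payload: bytes) -> str:
    """SHA-256 digest the producer is assumed to publish alongside the payload."""
    return hashlib.sha256(payload).hexdigest()

def accept_feedback(payload, expected_digest, produced_at, now=None,
                    max_age=timedelta(minutes=15)):
    """Return (accepted, reason) for one feedback batch.

    The integrity check runs first so a tampered payload is never
    evaluated further. max_age is an assumed freshness budget.
    """
    now = now or datetime.now(timezone.utc)
    if payload_digest(payload) != expected_digest:
        return False, "integrity check failed"
    if now - produced_at > max_age:
        return False, "feedback too stale"
    return True, "ok"
```

A rejected batch should be logged and investigated rather than silently dropped, since repeated staleness is itself a signal that the loop is lagging behind agent behavior.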

Decision Framework: Implementing Governance Loops

When to Prioritize Learning Loops:

You are transitioning from experimental pilots to a full AI governance roadmap for GenAI in high-stakes enterprise applications.

Your current infrastructure shows signs of "agentic drift" where models deviate from original business intent.

You need to move beyond static checklists to a state of continuous improvement in AI to meet global regulatory demands.

When to Use Traditional Audits:

The AI application is restricted to non-critical, low-risk internal functions like basic text formatting.

The data environment is highly stable and does not require frequent model retraining or logic updates.

How US Enterprises Approach AI Governance

US enterprises approach AI governance with a strong focus on risk, compliance, and scalability. At the core, the principles of AI governance guide how organizations design systems that are auditable and explainable. For many CTOs, this means AI systems must be accountable, with clear ownership, monitoring, and model traceability across the lifecycle. Enterprises are increasingly adopting structured models like the Model AI Governance Framework (MGF) for agentic AI to standardize governance across teams. This also aligns with broader AI ethics and governance framework requirements, especially in highly regulated industries like finance and healthcare.

How Singapore Companies Handle AI Governance

AI governance is no longer optional for enterprises operating in regulated markets like the United States and Singapore. As organizations deploy agentic AI systems at scale, leaders must ensure that those systems are accountable, which means decisions are transparent, traceable, and aligned with enterprise risk policies. This is where the principles of AI governance become critical for building trust and scalability.

From complying with evolving regulations to maintaining stakeholder confidence, enterprises are actively investing in an AI ethics and governance framework that supports both innovation and control. This guide explores the Model AI Governance Framework (MGF) for agentic AI and addresses key questions, such as what the key principles of responsible AI are, helping leaders operationalize governance effectively.

Download AI Risk Assessment Templates to evaluate your governance framework and identify compliance gaps.

Conclusion

Establishing a robust AI governance business context learning loop is the most reliable way to ensure that enterprise AI remains a strategic asset in 2026. As autonomous systems become the primary engine of digital operations, the ability to govern them through recursive feedback becomes a core competitive advantage. By partnering with Samta.ai, your organization gains the specialized engineering depth required to bridge the gap between abstract ethics and production-grade performance. Grounding your future strategy in a governed, fact-based framework at samta.ai helps your enterprise lead with integrity, accuracy, and sustainable speed in the intelligent economy.

Stay Ahead with Continuous AI Governance

Get your AI Governance Assessment and start building a smarter learning loop today.

About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

Tatva: AI-driven data intelligence for governed analytics and insights

VEDA: Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI: Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

Samta.ai provides the strategic consulting and technical engineering needed to align your human capital with your AI goals, ensuring a frictionless and high-performance transition.

FAQs

What is an AI governance business context learning loop?

It is a recursive mechanism that feeds operational data back into the oversight framework so that AI governance improves continuously. This allows the AI governance risk management strategy to adapt as the business environment and model performance change.

How does this differ from traditional IT audits?

Traditional audits are periodic and static, whereas a learning loop is continuous and automated. You can explore the technical differences in our brief on AI governance vs. traditional methodologies for enterprise operations.

Why is context critical in AI governance?

Contextual grounding ensures that the AI's logic remains relevant to the organization's specific truth. Transitioning to this model is often part of the higher stages in the AI governance maturity models used by global B2B leaders.

Can Samta.ai help automate these loops?

Absolutely. Samta.ai specializes in "Active Governance": building the technical layers that allow your AI to learn from its context while staying within strict ethical and operational boundaries.