The future of AI governance is no longer a distant policy debate. It is a boardroom priority shaping how organizations build, deploy, and scale artificial intelligence today. As AI systems make decisions that affect credit approvals, hiring outcomes, and medical diagnoses, the stakes of getting governance wrong have never been higher.

In this guide, we cover everything you need to know: what AI governance actually means, how global frameworks are evolving, and the concrete steps your organization can take to stay compliant and competitive. Whether you're a compliance officer, a business leader, or a student entering the field, this article gives you a practical, forward-looking roadmap.

What is AI Governance?

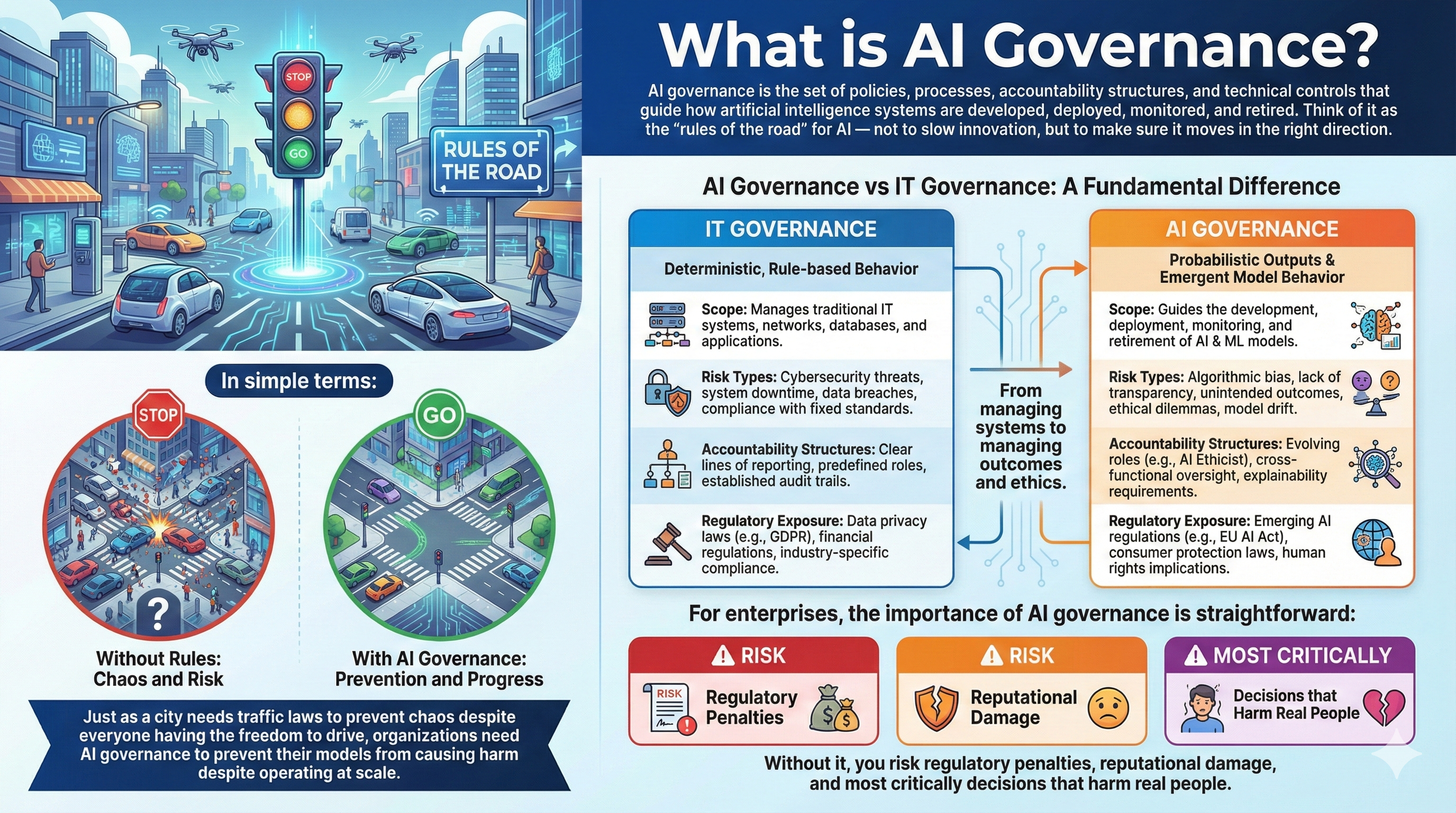

AI governance is the set of policies, processes, accountability structures, and technical controls that guide how artificial intelligence systems are developed, deployed, monitored, and retired. Think of it as the "rules of the road" for AI: not to slow innovation, but to make sure it moves in the right direction. In simple terms, just as a city needs traffic laws to prevent chaos even though everyone is free to drive, organizations need AI governance to keep models operating at scale from causing harm.

AI governance differs from traditional IT governance in a fundamental way. IT governance manages systems with deterministic, rule-based behavior. AI governance must account for probabilistic outputs, emergent model behavior, and ethical risk, areas where a firewall policy simply does not apply.

For enterprises, the importance of AI governance is straightforward: without it, you risk regulatory penalties, reputational damage, and, most critically, decisions that harm real people.

Enterprises treating governance as a competitive advantage are already ahead. Learn how leading organizations operationalize this in Enterprise AI Governance: The 2026 Executive Playbook

To understand how AI governance differs from traditional systems, read our detailed breakdown on AI Governance vs Traditional IT Governance: 7 Critical Differences

The Evolution of AI Governance

AI governance did not emerge overnight. Its evolution tracks closely with how powerful and consequential AI systems have become.

Pre-2020: The Ethics Discussion Phase: Governance conversations were largely academic. Principles like fairness, transparency, and accountability were proposed by organizations like the EU's High-Level Expert Group on AI and Google's internal AI ethics board. Enforcement mechanisms were virtually nonexistent.

2020–2023: Regulatory Acceleration: The EU began drafting what would become the AI Act. The US released its Blueprint for an AI Bill of Rights. NIST published its AI Risk Management Framework (AI RMF). ISO began developing the ISO/IEC 42001 standard for AI management systems. Real regulatory teeth started to appear.

2024–2026: Formal Global Policy Adoption: The EU AI Act entered into force in 2024, with phased compliance deadlines. Multinational companies began appointing dedicated AI Governance Officers. Global standards bodies started pushing for cross-border alignment. We are living in this phase now.

The evolution of AI governance is moving from voluntary principles to mandatory compliance, and that shift is accelerating.

Why the Future of AI Governance Matters

What happens when an AI system makes a biased lending decision at scale, across millions of applicants? Or when a hiring algorithm systematically screens out qualified candidates from underrepresented groups? The answer is not hypothetical. It is already happening.

The future of AI governance matters because the risks are no longer theoretical.

Economically, AI governance is becoming a market differentiator. Organizations with mature governance frameworks are better positioned to enter regulated markets, attract enterprise clients, and avoid costly remediation.

From a national security standpoint, ungoverned AI in defense, surveillance, and critical infrastructure creates systemic vulnerabilities that no single organization can manage alone.

For enterprise risk management, AI models embedded in core business processes (underwriting, fraud detection, customer scoring) carry regulatory and reputational exposure that traditional IT risk frameworks were not designed to handle.

The hardest question the field is grappling with: can innovation survive overregulation? Based on industry research, the answer depends almost entirely on how governance is designed: as a constraint or as an enabler. The organizations thriving today treat governance as a competitive advantage, not a compliance tax.

📥 Download our AI Governance Implementation Checklist to put these frameworks into action immediately.

Core Pillars of AI Governance

Effective AI governance is not monolithic. It rests on five interlocking pillars, each addressing a distinct dimension of responsible AI deployment.

Risk Management & Accountability

Every AI system carries risk, from model drift to adversarial manipulation. This pillar defines who owns that risk, how it is measured, and what triggers escalation. Accountability chains must extend from data scientists to C-suite executives.

Transparency & Explainability

Stakeholders, whether regulators, customers, or internal auditors, need to understand why an AI system made a particular decision. Explainability is not just ethical; in regulated industries, it is a legal requirement under frameworks like GDPR and the EU AI Act.

Bias Mitigation & Fairness

AI systems trained on historical data can encode and amplify systemic bias. This pillar requires continuous bias testing across demographic groups, with documented remediation protocols when disparate impact is detected.

Data Privacy & Security

AI models consume massive amounts of data, often including personally identifiable information. Governance must ensure data minimization, purpose limitation, and secure handling throughout the AI lifecycle, not just at the point of collection.

Monitoring & Continuous Oversight

Models degrade. Data distributions shift. Regulatory requirements evolve. Continuous monitoring ensures that an AI system performing well at launch continues to perform safely and compliantly over time.
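One way to put a number on "data distributions shift" is the population stability index (PSI), which compares how a feature or score was distributed at launch with how it is distributed today. The sketch below is a minimal illustration under stated assumptions: the bin proportions are made up, and the `REVIEW_THRESHOLD` of 0.2 is a hypothetical cut-off that each organization would need to calibrate for its own models.

```python
import math

# Hypothetical cut-off; calibrate per model and use case.
REVIEW_THRESHOLD = 0.2


def psi(expected, actual, eps=1e-6):
    """Population stability index between two binned distributions.

    Both inputs are lists of bin proportions that each sum to 1.
    Proportions are floored at eps so empty bins do not break the logarithm.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


# Illustrative data: score distribution at launch vs. in production today.
launch = [0.25, 0.25, 0.25, 0.25]
today = [0.10, 0.20, 0.30, 0.40]

score = psi(launch, today)
needs_review = score > REVIEW_THRESHOLD
```

A PSI of zero means the two distributions are identical; larger values indicate a bigger shift. The metric only says that inputs have moved, not whether the model has become less accurate or less fair, so it works best as a trigger for human review rather than as a verdict.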

These pillars define the foundation, but governance maturity evolves over time. Explore how organizations scale governance capabilities in AI Governance Maturity Models: From Ad Hoc to Optimized

AI Governance Frameworks and Global Regulations

The AI regulatory landscape is fragmented, but convergence is underway. Here are the frameworks every governance professional needs to understand:

EU AI Act: The world's first comprehensive AI regulation. It categorizes AI systems by risk level (unacceptable, high, limited, minimal) and imposes strict requirements on high-risk systems, including conformity assessments, transparency obligations, and human oversight mandates.

US NIST AI RMF: A voluntary but widely adopted framework organizing AI risk management around four functions: Govern, Map, Measure, and Manage. It has become the de facto standard for US federal agencies and many enterprises.

ISO/IEC 42001: The international standard for AI management systems. It provides a certifiable framework analogous to ISO 27001 for information security, giving organizations a structured path to demonstrable AI governance maturity.

OECD AI Principles: Adopted by 46 countries, these principles establish baseline expectations for trustworthy AI, including transparency, accountability, and robustness. They have heavily influenced national regulatory approaches.

Singapore Model AI Governance Framework: One of the most pragmatic and business-friendly governance models globally. Singapore's approach emphasizes internal governance structures, risk-proportionate controls, and industry-specific guidance, making it a useful reference for Asia-Pacific operations.

Understanding the future of global AI governance means tracking how these frameworks converge or conflict, as organizations operating across borders must navigate multiple overlapping regimes simultaneously.

Navigating multiple frameworks can be complex. Here's how enterprises align governance with regulatory requirements: AI Governance Compliance in Regulated Industries

How US Enterprises Use AI Governance Frameworks

US enterprises treat the AI governance framework as a core risk and compliance layer integrated with business strategy. Large organizations align their AI governance frameworks with standards like NIST AI RMF while adapting to sector-specific regulations across finance, healthcare, and insurance. Decision-making involves CTOs, Chief Risk Officers, and legal teams, ensuring governance is embedded early in the AI lifecycle. At scale, enterprises invest in governance platforms, audit trails, and monitoring systems, turning the AI governance framework into a strategic enabler, not just a compliance requirement.

How Singapore Companies Implement AI Governance Frameworks

Singapore organizations adopt a structured and business-friendly AI governance framework, guided by the Model AI Governance Framework from PDPC and MAS principles. This approach focuses on practical implementation, helping companies operationalize governance while scaling innovation across Southeast Asia. For enterprises evaluating what an AI governance framework looks like in practice, Singapore provides one of the most actionable models globally, combining explainability, accountability, and risk-based controls.

Download AI Risk Assessment Templates to identify governance gaps and align with US & Singapore compliance frameworks.

The Complete 7-Step AI Governance Implementation Process

In our experience working with AI governance teams across industries, the difference between organizations that govern AI effectively and those that struggle comes down to process discipline. Here is a proven seven-step implementation framework.

Step 1: AI Risk Assessment

What it is: A systematic inventory and risk scoring of all AI systems in use or under development, categorized by potential harm, affected populations, and regulatory exposure.

Why it matters: You cannot govern what you have not mapped. Many enterprises discover in this step that shadow AI (models deployed by business units without central oversight) represents their largest governance gap.

Tools used: NIST AI RMF mapping tools, risk register templates, model cards.

Common challenges: Incomplete model inventories; business unit resistance to disclosure; unclear ownership of legacy models.

Best practices: Use a tiered risk taxonomy aligned to the EU AI Act's risk categories. Assign a model owner to every system. Require disclosure as a condition of production deployment.

Real-world example: A large European bank conducted an AI risk assessment and discovered 47 models operating without documented owners or risk classifications. The assessment became the foundation of a two-year governance remediation program.
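The inventory and tiering practices in this step can be sketched as a small model register. This is an illustration only, not a compliance tool: the `ModelRecord` fields, the rules inside `classify`, the use-case sets, and the sample entries are all hypothetical. Only the four tier names come from the EU AI Act risk categories.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    # Tier names mirror the EU AI Act categories; assignment rules below are illustrative.
    UNACCEPTABLE = 4
    HIGH = 3
    LIMITED = 2
    MINIMAL = 1


@dataclass
class ModelRecord:
    name: str
    use_case: str
    owner: Optional[str]         # None means no documented owner
    affects_individuals: bool    # makes or informs decisions about people
    interacts_with_public: bool  # e.g. a customer-facing chatbot


# Hypothetical use-case lists an organization might maintain with legal counsel.
PROHIBITED_USE_CASES = {"social_scoring"}
HIGH_RISK_USE_CASES = {"credit_scoring", "hiring", "medical_triage"}


def classify(record: ModelRecord) -> RiskTier:
    """Assign a tier using simplified, illustrative rules."""
    if record.use_case in PROHIBITED_USE_CASES:
        return RiskTier.UNACCEPTABLE
    if record.use_case in HIGH_RISK_USE_CASES and record.affects_individuals:
        return RiskTier.HIGH
    if record.interacts_with_public:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def unowned_models(inventory):
    """Return the names of models that lack a documented owner."""
    return [m.name for m in inventory if not m.owner]


inventory = [
    ModelRecord("loan-scorer-v3", "credit_scoring", "risk-analytics", True, False),
    ModelRecord("support-bot", "customer_support", None, False, True),
    ModelRecord("demand-forecast", "inventory_planning", "supply-chain", False, False),
]

tiers = {m.name: classify(m) for m in inventory}
unowned = unowned_models(inventory)
```

Keeping the ownership check separate from the tiering logic means the "no owner" gap can block deployment on its own, regardless of which tier a model lands in.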

For a deeper strategic approach to risk and governance alignment, explore Strategic AI Governance for Enterprise Risk Management

Step 2: Policy Development

What it is: The creation of formal AI governance policies covering acceptable use, prohibited applications, data handling, model documentation standards, and incident response.

Why it matters: Policies translate principles into enforceable expectations. Without them, governance decisions are made ad hoc, inconsistently and without accountability.

Tools used: Policy management platforms, legal review workflows, stakeholder consultation frameworks.

Common challenges: Policies written in isolation from technical teams; overly rigid language that cannot adapt to rapid AI evolution; poor adoption due to lack of training.

Best practices: Co-develop policies with legal, technical, and business teams. Build in annual review cycles. Write policies at a level of specificity that enables compliance testing.

Real-world example: A Fortune 500 retailer revised its acceptable use policy after deploying a customer sentiment analysis tool that inadvertently captured protected health information. Policy gaps cost them 14 months of remediation effort.

Step 3: Governance Structure Creation

What it is: Establishing the organizational roles, committees, and decision-making processes that make AI governance operational, not just aspirational.

Why it matters: Governance without structure is just documentation. Someone must own accountability, escalation paths must exist, and cross-functional oversight must be institutionalized.

Tools used: RACI matrices, AI governance committee charters, escalation frameworks.

Common challenges: Governance structures that exist on paper but lack authority; unclear division of responsibility between legal, IT, and business; insufficient executive sponsorship.

Best practices: Establish a cross-functional AI Governance Committee with representation from legal, compliance, data science, and business. Appoint a dedicated AI Governance Officer or equivalent.

Governance structures must align with real business workflows. Learn how context-driven governance works: AI Governance in Business Context

Step 4: Model Documentation & Audit Trails

What it is: Comprehensive documentation of model development decisions, training data provenance, performance benchmarks, limitations, and intended use cases, maintained as a living audit trail.

Why it matters: Regulators increasingly require documentation as evidence of due diligence. Internally, documentation enables faster incident response and model reuse.

Tools used: Model cards, datasheets for datasets, MLflow, model registries.

Common challenges: Documentation treated as a post hoc exercise rather than embedded in the development lifecycle; inconsistent formats across teams; version control gaps.

Best practices: Make documentation a gate condition for model approval. Standardize on model card templates. Require documentation updates for every material model change.
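Making documentation a gate condition can be as simple as refusing approval when a model card is incomplete. The following is a minimal sketch under stated assumptions: the `REQUIRED_FIELDS` list is a hypothetical template drawn from the documentation items named in this step, and the sample card is invented for illustration.

```python
# Hypothetical template; adapt the field list to your own model card standard.
REQUIRED_FIELDS = (
    "intended_use",
    "training_data_provenance",
    "performance_benchmarks",
    "limitations",
    "owner",
)


def missing_fields(model_card: dict) -> list:
    """Return required fields that are absent or empty in the model card."""
    return [field for field in REQUIRED_FIELDS if not model_card.get(field)]


def approve_for_deployment(model_card: dict) -> bool:
    """Gate condition: approve only when every required field is filled in."""
    return not missing_fields(model_card)


# Illustrative card with one field left blank.
card = {
    "intended_use": "Rank inbound support tickets by urgency",
    "training_data_provenance": "Internal ticket archive",
    "performance_benchmarks": {"macro_f1": 0.81},
    "limitations": "",
    "owner": "support-ml-team",
}

gaps = missing_fields(card)
approved = approve_for_deployment(card)
```

A check like this could run in the same pipeline that promotes a model to production, so an empty "limitations" field blocks release instead of being discovered in an audit.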

Step 5: Bias & Fairness Testing

What it is: Systematic evaluation of model outputs across demographic groups to identify and quantify disparate impact, followed by documented remediation.

Why it matters: Bias in AI systems is not always intentional; it is often structural, embedded in historical training data. Without testing, organizations deploy harm unknowingly.

Tools used: IBM AI Fairness 360, Aequitas, Credo AI, Holistic AI.

Common challenges: Defining fairness metrics appropriate to the use case (there are mathematically incompatible definitions of fairness); lack of demographic data for testing; organizational reluctance to surface findings.

Best practices: Test for multiple fairness criteria simultaneously. Document the rationale for the metric selected. Treat bias findings as engineering problems, not public relations problems.

Real-world example: Singapore's Personal Data Protection Commission investigated a hiring algorithm that rejected significantly higher proportions of female applicants for technical roles. The case highlighted the gap between testing at development time and monitoring in production.
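To make "testing for multiple fairness criteria" concrete, the sketch below computes two simple group-level measures from the same set of decisions: the disparate impact ratio and the statistical parity difference. The sample decisions are invented, and the 0.8 review threshold is an assumption that echoes the four-fifths rule of thumb from US employment contexts; whether it suits a given use case is a judgment call that should be documented.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs -> {group: selection rate}."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group
    return min(rates.values()) / highest


def parity_difference(rates):
    """Absolute gap between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


# Illustrative data: 100 applicants per group.
decisions = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 42 + [("group_b", False)] * 58
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
gap = parity_difference(rates)
needs_review = ratio < 0.8  # assumed threshold, see note above
```

These two measures look only at outcomes per group. Error-rate criteria such as equal false positive rates need ground-truth labels as well, which is one reason different fairness definitions can disagree on the same model.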

See how organizations handle bias in real-world deployments: AI Governance Case Study: Real-World Implementation Insights

Step 6: Regulatory Alignment

What it is: Mapping your AI systems and governance processes against applicable regulatory requirements (EU AI Act, GDPR, NIST AI RMF, sector-specific rules) and identifying compliance gaps.

Why it matters: The AI compliance frameworks governing your organization depend on where you operate, what sector you are in, and what risk category your AI systems fall into. Alignment must be jurisdiction-specific and continuously maintained.

Tools used: Regulatory mapping matrices, compliance management platforms, external legal counsel.

Common challenges: Multi-jurisdictional complexity; rapidly evolving regulatory guidance; difficulty translating technical model behavior into legal compliance language.

Best practices: Build a regulatory horizon-scanning function. Engage regulators proactively where sandbox programs exist. Use ISO/IEC 42001 certification as a baseline compliance signal.
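A regulatory mapping matrix can start as a plain lookup from framework to required controls, compared against what a system already has in place. In this sketch the requirement labels paraphrase this article's own summaries of the EU AI Act and NIST AI RMF; they are placeholders rather than a legal checklist, and the example system is hypothetical.

```python
# Labels paraphrase the article's framework summaries; not a legal checklist.
REQUIREMENTS = {
    "EU AI Act (high-risk)": {"conformity_assessment", "transparency", "human_oversight"},
    "NIST AI RMF": {"govern", "map", "measure", "manage"},
}


def compliance_gaps(applicable_frameworks, implemented_controls):
    """Map each applicable framework to the requirements not yet covered."""
    implemented = set(implemented_controls)
    gaps = {}
    for framework in applicable_frameworks:
        missing = REQUIREMENTS[framework] - implemented
        if missing:
            gaps[framework] = sorted(missing)
    return gaps


# Hypothetical credit model operating in both the EU and the US.
implemented = {"transparency", "govern", "map", "measure", "manage"}
gaps = compliance_gaps(["EU AI Act (high-risk)", "NIST AI RMF"], implemented)
```

Frameworks with no outstanding items are left out of the result, so the output doubles as a to-do list per jurisdiction.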

Step 7: Continuous Monitoring

What it is: Ongoing surveillance of model performance, data quality, fairness metrics, and regulatory compliance, with automated alerting and defined response protocols.

Why it matters: AI governance is not a project with an end date. Models deployed into dynamic environments will drift. New data will introduce new biases. Regulatory requirements will evolve. Continuous monitoring is what keeps governance alive.

Tools used: ModelOp, IBM Watson OpenScale (now part of IBM watsonx.governance), Fiddler AI, DataRobot MLOps.

Common challenges: Alert fatigue from poorly calibrated monitoring thresholds; insufficient human review of flagged issues; monitoring gaps for third-party or vendor-supplied models.

Best practices: Define specific metric thresholds that trigger mandatory review. Assign monitoring ownership clearly. Include third-party models in your monitoring scope.

Real-world example (financial industry): A major UK retail bank implemented continuous monitoring on its mortgage decisioning model and detected a 3.2% drift in approval rates for applicants in specific postcode clusters. Catching it early, before a regulatory examination, allowed the bank to remediate without enforcement action.
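A threshold that triggers mandatory review can be expressed as a comparison between baseline and current approval rates per segment. The sketch below is a simplified illustration: the segment names, the rates, and the default `threshold` of 0.03 (three percentage points) are assumptions, and a production system would also need to account for sample size before raising an alert.

```python
def drift_alerts(baseline, current, threshold=0.03):
    """Return {segment: drift} for segments whose approval rate moved more than threshold.

    baseline and current map segment name -> approval rate (0.0 to 1.0).
    Segments missing from current are skipped rather than flagged.
    """
    alerts = {}
    for segment, base_rate in baseline.items():
        if segment not in current:
            continue
        drift = current[segment] - base_rate
        if abs(drift) > threshold:
            alerts[segment] = round(drift, 4)
    return alerts


# Illustrative approval rates at validation time and in the latest monitoring window.
baseline = {"segment_a": 0.62, "segment_b": 0.58, "segment_c": 0.60}
current = {"segment_a": 0.61, "segment_b": 0.53, "segment_c": 0.60}

alerts = drift_alerts(baseline, current)
```

Here only `segment_b` is flagged, because its approval rate fell by about five percentage points. Setting the threshold too low is one source of the alert fatigue mentioned above, so it is worth tuning against historical variation.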

Scaling governance across multiple AI systems requires structured oversight. Learn more: Scaling AI Governance for Enterprise Systems

Book a Free Consultation to assess your current AI governance maturity and build a structured implementation roadmap aligned with global frameworks.

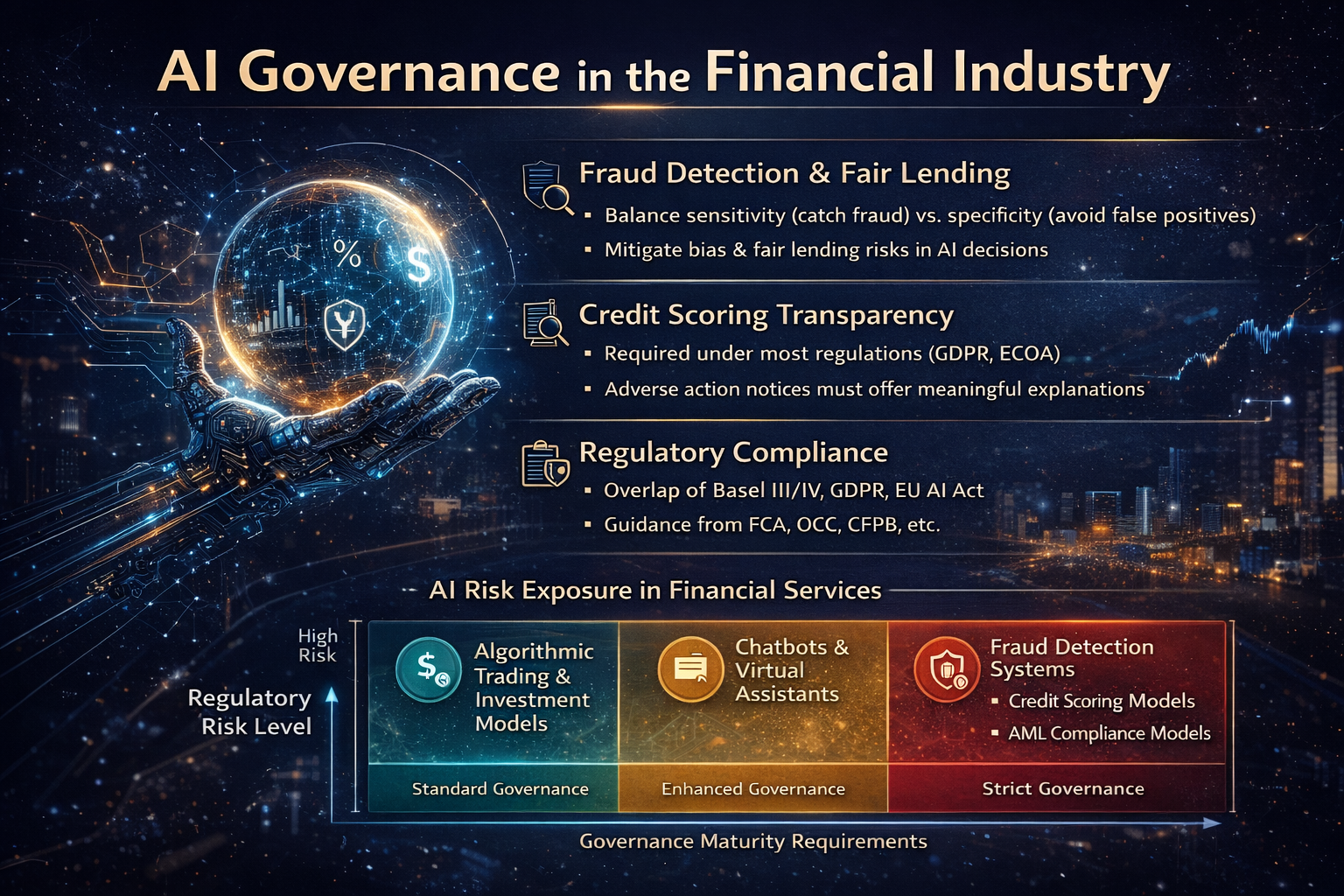

AI Governance in the Financial Industry

AI governance in the financial industry is uniquely demanding. Financial AI systems make high-stakes decisions (credit approvals, fraud flags, insurance pricing) that affect millions of consumers, under some of the most mature regulatory frameworks in the world.

Fraud detection governance requires balancing sensitivity (catching fraud) against specificity (avoiding false positives that harm legitimate customers). AI systems optimized purely for detection rates can disproportionately flag customers from certain demographics, creating fair lending exposure.
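The trade-off described here can be checked numerically. The sketch below computes sensitivity and specificity for a fraud model and then breaks the false positive rate down by customer group, which is where disproportionate flagging would show up. The labels, predictions, and group assignments are invented for illustration, and the functions assume the data contains at least one fraud case and one legitimate case.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels where 1 means fraud."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth and pred:
            tp += 1
        elif not truth and pred:
            fp += 1
        elif not truth and not pred:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def sensitivity(y_true, y_pred):
    """Share of actual fraud cases that the model caught."""
    tp, _, _, fn = confusion_counts(y_true, y_pred)
    return tp / (tp + fn)


def specificity(y_true, y_pred):
    """Share of legitimate cases that the model correctly left alone."""
    _, fp, tn, _ = confusion_counts(y_true, y_pred)
    return tn / (tn + fp)


def false_positive_rate_by_group(y_true, y_pred, groups):
    """False positive rate per group, skipping groups with no legitimate cases."""
    rates = {}
    for group in sorted(set(groups)):
        idx = [i for i, g in enumerate(groups) if g == group]
        _, fp, tn, _ = confusion_counts(
            [y_true[i] for i in idx], [y_pred[i] for i in idx]
        )
        if fp + tn:
            rates[group] = fp / (fp + tn)
    return rates


# Illustrative data: 1 = fraud, 0 = legitimate.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
groups = ["a", "b", "a", "b", "b", "a", "a", "b", "a", "b"]

sens = sensitivity(y_true, y_pred)
spec = specificity(y_true, y_pred)
fpr = false_positive_rate_by_group(y_true, y_pred, groups)
```

In this toy data the overall specificity looks healthy, yet every false positive falls on group "b". An aggregate metric alone would hide that, which is why the per-group breakdown matters for fair lending exposure.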

Credit scoring transparency is now a regulatory requirement in many major jurisdictions. Under GDPR, individuals subject to solely automated decisions with legal or similarly significant effects are entitled to meaningful information about the logic involved. Under the Equal Credit Opportunity Act in the US, adverse action notices must be grounded in factors that can be meaningfully explained.

Regulatory compliance in financial AI spans multiple overlapping frameworks: Basel III/IV operational risk requirements, GDPR data governance obligations, the EU AI Act's classification of credit scoring as a high-risk AI use case, and sector-specific guidance from regulators like the FCA, OCC, and CFPB.

Real-world example: Following regulatory scrutiny, a global investment bank restructured its AI governance function to include quarterly model risk committee reviews for all credit-related models, with mandatory sign-off from both the CRO and Chief Compliance Officer before any model modification.

Financial systems require highly contextual governance frameworks. Explore: AI Contextual Governance for Business-Critical Systems

Challenges in Global Governance of AI

The global governance of AI faces structural obstacles that even well-resourced organizations struggle to overcome.

Cross-border policy differences create compliance complexity for multinational organizations. A model compliant under Singapore's framework may require significant modification to meet EU AI Act requirements, and the two frameworks do not always point in the same direction.

Rapid innovation cycles outpace regulatory development. By the time a regulation is drafted, debated, and enacted, the technology it aims to govern has often evolved substantially. Generative AI is the most dramatic recent example.

Enforcement difficulties are significant. Most AI governance regulations depend heavily on self-reporting, documentation, and post hoc audits. Real-time enforcement of AI system behavior remains technically and legally underdeveloped.

Ethical disagreements across cultures complicate global standards. Concepts of individual privacy, acceptable surveillance, and fairness criteria vary significantly across jurisdictions, making universal governance principles difficult to operationalize uniformly.

AI weaponization risks, including autonomous weapons systems, AI-enabled disinformation, and adversarial model attacks, represent governance challenges that extend beyond enterprise compliance into geopolitical territory.

Real-world examples include: the EU's initial attempt to ban real-time biometric surveillance being negotiated down to a narrower prohibition; China's generative AI regulations taking a fundamentally different approach to content governance than Western frameworks; and the ongoing international disagreement about what constitutes an "autonomous weapon system" subject to arms control.

Addressing these challenges requires measurable governance performance. Learn what leaders track: AI Governance KPIs: What CTOs Measure

The Future of AI Governance (2026–2030 Trends)

So what is the future of AI governance, concretely? Based on trajectory analysis and emerging policy signals, here are the developments we expect to define the next five years.

Autonomous AI Regulation: Agentic AI systems that take actions, not just make recommendations, will require fundamentally new governance models. Static documentation and periodic audits are insufficient for systems that evolve their own behavior in production.

Real-Time AI Auditing: Continuous, automated compliance monitoring will replace periodic manual audits. Regulators in financial services are already piloting supervisory technology that can monitor model behavior directly, rather than reviewing documentation after the fact.

Global AI Treaty Possibilities: International discussions about a binding AI governance treaty, analogous to nuclear nonproliferation frameworks, are gaining momentum. The UN's advisory body on AI governance released recommendations in 2024 that explicitly called for multilateral coordination mechanisms.

AI Governance Automation: Ironically, AI will increasingly be used to govern AI. Automated policy compliance checking, bias detection, and regulatory mapping will reduce the human overhead of governance, while raising new questions about accountability for the governance systems themselves.

Governance for AGI: As systems approach and potentially exceed human-level performance across domains, current risk-based governance frameworks, designed around narrow AI applications, will require fundamental rethinking.

Quantum & Edge AI Implications: Quantum computing could break current cryptographic protections underlying data privacy governance. Edge AI deployment creates governance challenges for systems operating outside centralized monitoring infrastructure.

The future of AI governance belongs to organizations that treat it as a dynamic capability, not a compliance checkbox. Those investing in governance infrastructure today are building the foundation for sustainable AI deployment in an increasingly regulated world. As organizations explore the future of AI technology, governance frameworks will evolve from static compliance models to real-time, automated oversight systems.

Agentic AI systems introduce entirely new governance challenges. Explore the framework here: Agentic AI Governance Framework: Managing Autonomous Systems

📅 Schedule a consultation with our governance experts to assess your organization's AI governance maturity and build your 2026 roadmap.

About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

Tatva: AI-driven data intelligence for governed analytics and insights

VEDA: Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI: Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

FAQs

What is AI governance in simple terms?

AI governance is the system of rules, roles, and processes an organization uses to make sure its AI systems are safe, fair, and compliant with applicable laws. Think of it like a building code for AI: it does not prevent you from building, but it ensures what you build will not collapse and will not harm the people inside. It covers everything from how training data is collected to how model decisions are explained to the people they affect, and who is accountable when something goes wrong.

What is the future of AI governance globally?

The future of global AI governance is moving toward binding international frameworks, real-time regulatory oversight, and automated compliance systems. The EU AI Act has established the first comprehensive mandatory regime, and other jurisdictions are developing comparable frameworks. Between 2026 and 2030, we expect to see convergence toward multilateral governance standards, AI-assisted regulatory auditing, and new governance models specifically designed for autonomous and agentic AI systems that current frameworks were not built to address.

How does AI governance differ from IT governance?

Traditional IT governance manages deterministic systems: systems that behave consistently and predictably according to programmed rules. AI governance must manage probabilistic, adaptive systems whose outputs can shift over time, embed bias from training data, and produce decisions that are difficult to explain. IT governance frameworks like COBIT and ITIL provide useful structural models, but they must be significantly extended to address AI-specific risks, including model drift, fairness obligations, and explainability requirements.

Why is AI governance important for financial institutions?

Financial institutions use AI to make consequential decisions (credit approvals, fraud detection, insurance pricing) that directly affect consumers' financial lives. Regulators in every major jurisdiction hold financial institutions to high standards of fairness, transparency, and explainability in these decisions. Poor AI governance in financial services creates exposure across multiple dimensions simultaneously: regulatory enforcement actions, civil litigation, reputational damage, and systemic financial risk if AI-driven decisions cause correlated failures at scale.

Who is responsible for AI governance in a company?

Responsibility for AI governance should be distributed but clearly defined. The AI Governance Officer or Chief AI Officer typically holds primary accountability. Data scientists and ML engineers are responsible for technical governance controls. Legal and compliance teams handle regulatory alignment. Business unit leaders own the risk associated with AI systems deployed in their domain. Ultimately, the board and C-suite are responsible for ensuring the governance function has the authority and resources to operate effectively.
