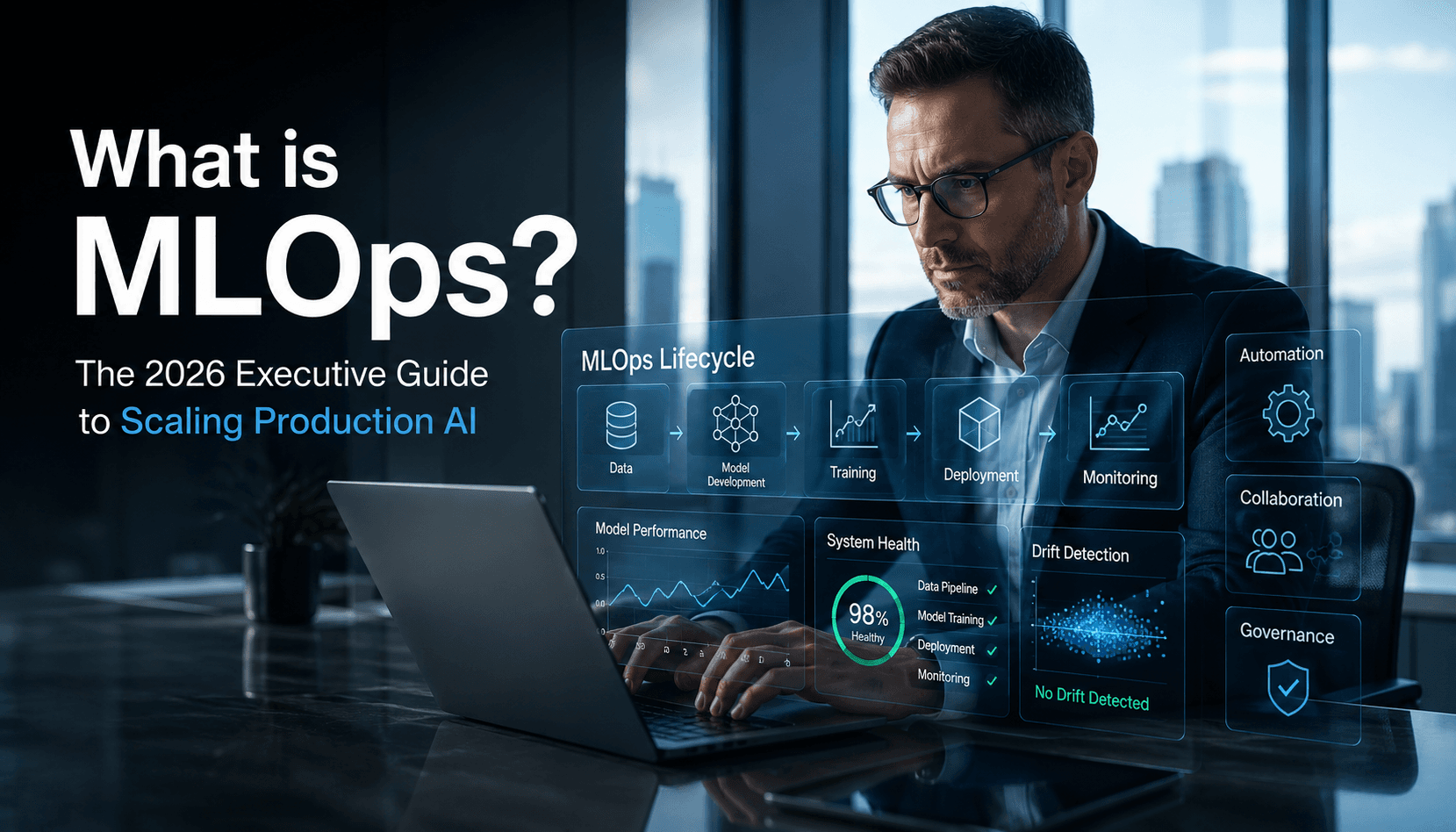

Defining MLOps and AIOps clearly is essential for B2B leaders aiming to scale intelligent systems. MLOps focuses on the machine learning lifecycle, keeping model deployment and monitoring consistent across production environments. AIOps, in contrast, uses AI to automate IT operations and monitor infrastructure health. Understanding how MLOps, LLMOps, and AIOps differ is critical for maintaining high-performing architectures, because each discipline addresses a different layer of the enterprise stack. Ultimately, MLOps is used to bridge the gap between data science and operational reliability in order to drive business value. Samta.ai provides the platform and expertise to integrate these methodologies into a cohesive, high-ROI ecosystem for modern founders.

Key Takeaways

Standardization: MLOps introduces consistency across the machine learning lifecycle

Operational Efficiency: AIOps automates infrastructure monitoring and incident response

Risk Mitigation: Strong governance is essential for trustworthy AI

Unified Governance: Combining data engineering with MLOps enables scalable AI adoption

What Are MLOps and AIOps in 2026?

MLOps has evolved into a critical layer of enterprise AI strategy. It merges DevOps practices with data science workflows, ensuring models are reproducible, auditable, and continuously improving. To understand how MLOps fits into the broader ecosystem, explore the differences in AI lifecycle vs MLOps.

AIOps, on the other hand, applies machine learning to IT operations: analyzing logs, detecting anomalies, and automating system responses. A well-structured AI model lifecycle management strategy ensures that models and infrastructure operate together seamlessly. According to Gartner’s research on AIOps, AIOps significantly reduces mean time to resolution (MTTR) by automating root cause analysis, which highlights its growing importance in enterprise IT.
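To make the anomaly-detection idea concrete, here is a minimal sketch of one simple technique: flagging a metric sample when it deviates sharply from a trailing window of recent values. The function name `detect_anomalies`, the window size, the threshold, and the sample latency values are all illustrative assumptions, not part of any specific AIOps product. Commercial platforms typically use far richer models.

```python
from statistics import mean, stdev


def detect_anomalies(values, window=5, threshold=3.0):
    """Return indices of samples that deviate from the trailing-window mean
    by more than `threshold` standard deviations (a rolling z-score check).

    `window` and `threshold` are illustrative defaults, not tuned values.
    """
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: treat any change at all as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical request-latency samples in milliseconds.
latency_ms = [102, 98, 101, 99, 100, 103, 97, 450, 101, 99]
print(detect_anomalies(latency_ms))  # prints [7]
```

In a real deployment the flagged index would feed an alerting or remediation step. Note that a large spike also inflates the statistics of the following windows, which is one reason production systems tend to prefer more robust estimators.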

Core Comparison: Defining the Operational Landscape

| Feature | MLOps (Machine Learning Ops) | AIOps (IT Operations) | LLMOps (Large Language Model Ops) | Business Impact |
| --- | --- | --- | --- | --- |
| Primary Focus | ML model lifecycle & retraining | IT infrastructure & automation | LLM deployment, prompts & token optimization | End-to-end AI efficiency |
| Data Types | Features & labeled datasets | Logs, metrics, traces | Unstructured text, embeddings, vector data | Better data utilization |
| Primary Goal | Predictive accuracy & reliability | System uptime & health | Context-aware responses & scalability | Improved decision-making |
| Key Benefit | Faster model deployment | Automated incident resolution | Optimized LLM performance & cost | Reduced operational costs |
| Core Users | Data scientists, ML engineers | IT ops, DevOps teams | AI engineers, prompt engineers | Cross-functional collaboration |
| Tools & Stack | MLflow, Kubeflow, DVC | Splunk, Dynatrace, Moogsoft | LangChain, vector DBs, OpenAI APIs | Faster time-to-market |

Practical Use Cases for Modern Operations

1. Automated Fraud Detection

A robust MLOps pipeline enables continuous retraining of fraud detection models using real-time transaction data.

2. Predictive Maintenance

Enterprises apply MLOps to data engineering pipelines that process IoT sensor data and predict equipment failures before they happen.

3. IT Incident Response

AIOps platforms detect anomalies in logs and trigger automated responses, reducing downtime without manual intervention.

4. Customer Personalization

With MLOps, recommendation engines evolve dynamically based on user behavior and engagement patterns.

5. Governance and Compliance

Strong governance frameworks supported by AI security and compliance services help organizations meet regulatory requirements.

6. Model Integrity

Continuous AI model monitoring strategies ensure models remain accurate and unbiased over time.
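One common way to put continuous monitoring into practice is to compare the distribution of a feature in production against its distribution at training time. The sketch below uses the Population Stability Index (PSI) for that comparison. The function names, the bin count, and the 0.2 retraining threshold (a widely quoted rule of thumb, not a universal standard) are assumptions for illustration only.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compute PSI between a baseline sample and a production sample.

    Buckets are equal-width over the baseline's range; production values
    outside that range are clamped into the first or last bucket.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty buckets.
        return [max(c / len(sample), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(psi, threshold=0.2):
    """Hypothetical policy: flag the model when PSI exceeds the threshold."""
    return psi > threshold


# Hypothetical feature values: training baseline vs. shifted production data.
training = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(training, production)
print(needs_retraining(psi))
```

A check like this would typically run on a schedule per feature, with a flagged result opening a ticket or triggering the retraining pipeline rather than silently replacing the model.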

Receive a comprehensive evaluation of your current MLOps maturity and a roadmap for AI implementation. Claim your free assessment report now.

Limitations and Risks

Despite their advantages, these frameworks can introduce complexity if not implemented correctly.

Data Drift & Model Decay: Models lose accuracy over time without monitoring

Over-Automation Risks: Poorly configured AIOps can trigger incorrect actions

Compliance Gaps: Lack of governance can expose enterprises to regulatory risk

Implementing a structured AI risk management model is critical to maintaining control over automated systems.

Decision Framework: When to Implement

You should consider adopting MLOps when:

You have multiple models in production

Continuous retraining is required

Model performance impacts revenue or operations

AIOps becomes essential when:

You manage hybrid or multi-cloud environments

Manual monitoring is no longer scalable
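The criteria above can be captured as a simple checklist. The sketch below is one possible encoding, assuming that any single criterion is enough to put a discipline on the shortlist; the `OrgProfile` fields and the `recommend` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    """Hypothetical snapshot of an organization's current situation."""
    models_in_production: int
    needs_continuous_retraining: bool
    models_affect_revenue_or_ops: bool
    hybrid_or_multicloud: bool
    manual_monitoring_scales: bool


def recommend(profile):
    """Return the disciplines worth evaluating for this profile.

    Assumption: each listed criterion is treated as an independent signal,
    so one matching criterion is enough to recommend the discipline.
    """
    recommendations = []
    if (
        profile.models_in_production > 1
        or profile.needs_continuous_retraining
        or profile.models_affect_revenue_or_ops
    ):
        recommendations.append("MLOps")
    if profile.hybrid_or_multicloud or not profile.manual_monitoring_scales:
        recommendations.append("AIOps")
    return recommendations


# Example: several production models on a multi-cloud footprint.
profile = OrgProfile(
    models_in_production=4,
    needs_continuous_retraining=True,
    models_affect_revenue_or_ops=True,
    hybrid_or_multicloud=True,
    manual_monitoring_scales=False,
)
print(recommend(profile))
```

A stricter policy could require two or more signals before recommending adoption; the right bar depends on team size and budget.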

To go a step further, organizations are now building agentic AI engineering architecture to automate decision-making at scale.

Conclusion

Navigating the complexities of MLOps and AIOps requires a strategic balance between automation and oversight. As organizations transition toward autonomous systems, the integration of these frameworks will define operational success. Samta.ai provides the deep technical expertise and platform tools necessary to master the AI and ML landscape. By prioritizing governance and data integrity, leaders can build resilient systems that turn raw data into a sustainable competitive advantage.

Reach out to the Samta.ai team today to bridge the gap between your data science goals and operational excellence. Connect with our experts here.

About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

TATVA: AI-driven data intelligence for governed analytics and insights

VEDA: Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI: Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

Samta.ai provides the strategic consulting and technical engineering needed to align your human capital with your AI goals, ensuring a frictionless.

FAQs

What is the purpose of MLOps and trustworthy AI?

The goal is to ensure machine learning systems are efficient, transparent, and aligned with ethical standards. MLOps enables auditability, fairness, and accountability across the lifecycle, making AI systems enterprise-ready.

What is agile MLOps and how does it work?

Agile MLOps refers to applying agile development principles to machine learning workflows. It focuses on rapid experimentation, continuous feedback loops, and iterative model improvements based on real-world performance.

What are the most common MLOps tools?

Core MLOps tools include MLflow for experiment tracking, Kubeflow for orchestration, and DVC for data versioning. These tools are often integrated via data integration consulting services to create a seamless flow from raw data ingestion to production-grade model serving.

What is the difference between MLOps and LLMOps?

MLOps focuses on models built on structured data and prioritizes feature engineering and model retraining. LLMOps is tailored for large language models and emphasizes prompt engineering, vector databases, and token optimization.