
Most enterprise AI projects don't fail because the technology doesn't work. They fail because the organization wasn't ready for it. 70% of AI projects never make it to production, and the root cause is almost always the same: no structured AI readiness framework was in place before the first line of code was written. An AI readiness framework is the strategic foundation every enterprise needs before committing budget, people, and credibility to AI initiatives. Without it, you're building on sand.

In this definitive 2026 guide, we walk you through everything from understanding what AI readiness actually means, to running a full AI readiness assessment, to standing up enterprise-grade governance, risk controls, and monitoring. Whether you're a C-suite executive, IT leader, or data team manager, this post gives you the blueprint to move from "AI curious" to "AI capable" with confidence.

What Is AI Readiness? A Clear 2026 Definition

AI readiness is an organization's demonstrated capacity to design, deploy, govern, and scale artificial intelligence solutions in ways that are technically sound, legally compliant, and aligned with business goals.

It's not just about having a GPU budget or hiring a data scientist. True AI readiness spans five organizational layers: data maturity, technical infrastructure, talent capability, governance structures, and cultural alignment. Miss any one of these, and your AI initiative will stall, often in the most expensive way possible.

How AI Readiness Differs from "Digital Readiness"

Many leaders conflate digital transformation readiness with AI readiness. They're related, but not the same. Digital readiness asks: "Can we operate efficiently on digital platforms?" AI readiness asks something harder: "Can we make high-stakes, automated decisions and govern them responsibly?"

The difference matters because AI introduces unique risks that traditional digital systems don't: model drift, algorithmic bias, hallucination, and regulatory liability under frameworks like the EU AI Act. A company can be highly digitally mature and still be completely unready for AI.

Key Takeaway: AI readiness is not a binary state. It's a spectrum, and most enterprises in 2026 sit somewhere in the middle: partially ready in some dimensions, dangerously underprepared in others.

Why Your Enterprise Needs an AI Readiness Framework

Here's a sobering reality from our work with 50+ enterprise clients: organizations that jump into AI without a structured readiness framework spend an average of 2.3× more budget and take 40% longer to reach production compared to those that assess readiness first. (Source: Gartner AI Implementation Report.)

An AI readiness assessment framework gives your organization three things money can't buy back later: clarity, alignment, and risk awareness.

Clarity: You know exactly where your gaps are before they become expensive problems

Alignment: Every stakeholder from the CFO to the data engineer operates from the same playbook

Risk awareness: You've mapped the technical, ethical, and regulatory risks before they surface in production

Industry Stat: 70% of AI projects fail to reach production. The leading causes: poor data quality (42%), unclear business objectives (38%), and absent governance structures (31%). (Source: McKinsey Global AI Survey 2025.)

The framework isn't bureaucratic overhead. It's the difference between an AI initiative that transforms your business and one that becomes a cautionary case study.

The 6 Core Pillars of an AI Readiness Framework

Every credible AI readiness framework template anchors around six foundational dimensions. Think of these as the load-bearing walls of your AI capability. You can't remove one and expect the structure to hold.

| Pillar | What It Measures | Common Gap in 2026 | Quick Win |
|---|---|---|---|
| 1. Data Maturity | Quality, accessibility, and governance of organizational data | Siloed data in legacy systems | Run a data catalog audit |
| 2. Technical Infrastructure | Cloud, compute, MLOps, and API readiness | No MLOps pipeline; manual deployments | Adopt a managed ML platform |
| 3. AI Governance | Policies, accountability, and oversight structures | No AI governance policy exists | Draft an AI acceptable use policy |
| 4. Talent & Skills | In-house AI capability vs. dependency on vendors | Only 1–2 data scientists for the whole org | Upskill domain experts in AI literacy |
| 5. Business Strategy | Alignment of AI initiatives with measurable business outcomes | AI projects not tied to KPIs | Define ROI metrics before building |
| 6. Culture & Change Mgmt | Organizational appetite for data-driven decision-making | HiPPO (highest-paid person's opinion) culture | Celebrate data-driven wins publicly |

Pro Tip: Score each pillar on a 1–5 maturity scale. Any pillar scoring below 3 is a critical blocker. Address these before expanding AI scope, not after.
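The scoring rule in the Pro Tip is simple enough to automate. The sketch below is one possible way to record pillar scores and flag blockers; the `PillarScore` class, the `critical_blockers` helper, and the example scores are all hypothetical and shown for illustration only.

```python
from dataclasses import dataclass

# From the Pro Tip above: any pillar scoring below 3 is a critical blocker.
BLOCKER_THRESHOLD = 3


@dataclass(frozen=True)
class PillarScore:
    pillar: str
    score: int  # position on the 1-5 maturity scale

    def __post_init__(self):
        if not 1 <= self.score <= 5:
            raise ValueError(f"{self.pillar}: score must be 1-5, got {self.score}")


def critical_blockers(scores):
    """Return the pillars below the blocker threshold, weakest first."""
    blockers = [s for s in scores if s.score < BLOCKER_THRESHOLD]
    return sorted(blockers, key=lambda s: s.score)


# Hypothetical scores, not taken from any real assessment.
example_scores = [
    PillarScore("Data Maturity", 2),
    PillarScore("Technical Infrastructure", 3),
    PillarScore("AI Governance", 1),
    PillarScore("Talent & Skills", 3),
    PillarScore("Business Strategy", 4),
    PillarScore("Culture & Change Mgmt", 3),
]

for blocker in critical_blockers(example_scores):
    print(f"BLOCKER: {blocker.pillar} (score {blocker.score})")
```

Validating the 1–5 range at construction time keeps a mistyped score from silently passing the threshold check.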

For a deeper look at how governance fits into this picture, read our guide on AI Governance in a Business Context.

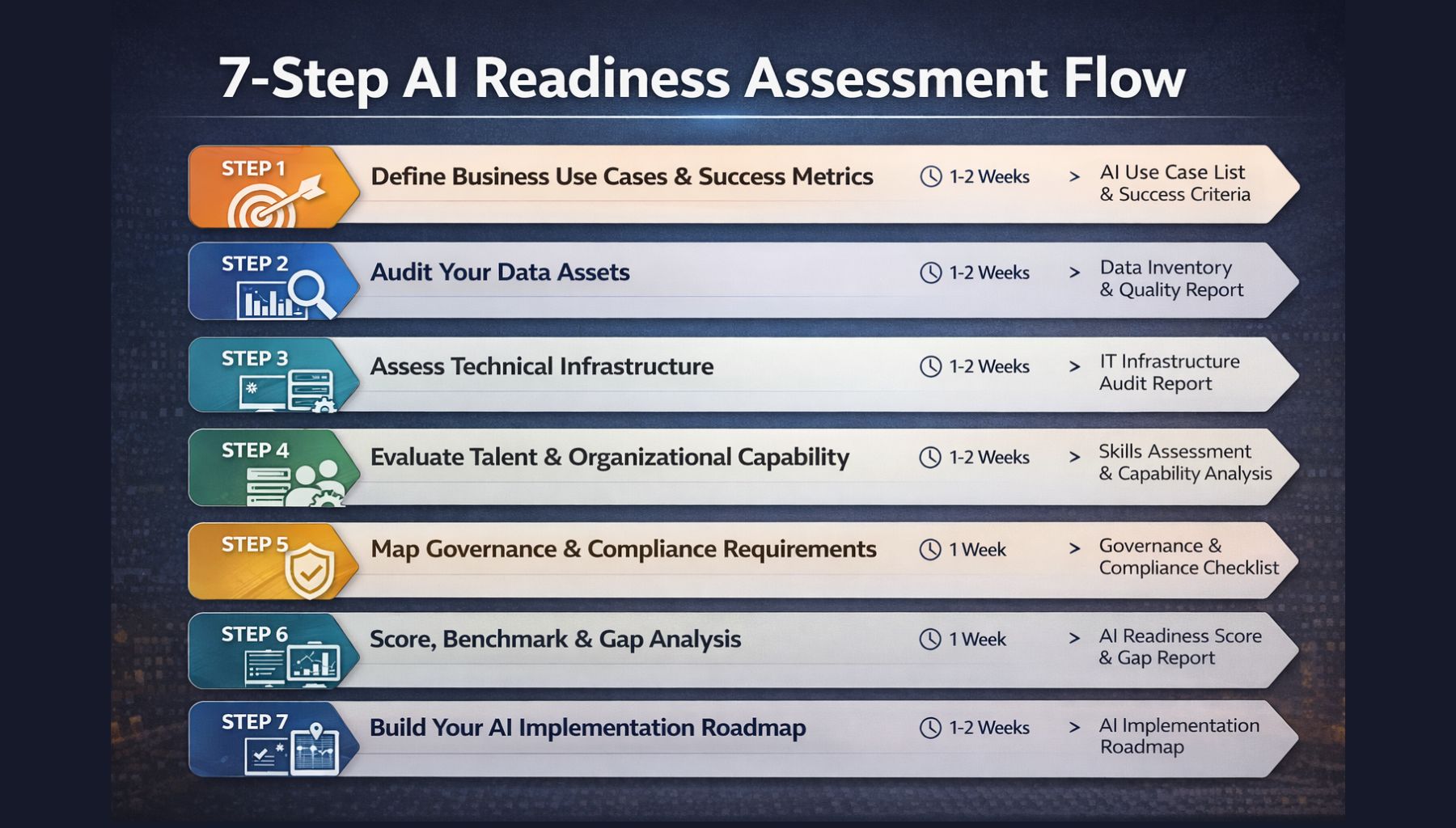

The Complete 7-Step AI Readiness Assessment

An AI readiness assessment isn't a one-time checkbox exercise. It's a structured diagnostic that reveals your exact starting position and maps the fastest route to AI production readiness. Here's the process we use with enterprise clients.

Step 1: Define Business Use Cases & Success Metrics

What it is: Before assessing anything technical, anchor the entire framework in business value. Identify 2–3 high-priority AI use cases with clear, measurable outcomes.

Why it matters: AI without a business problem to solve is just research. Every successful enterprise AI deployment starts with a problem statement, not a technology.

Tools used: Jobs-to-be-Done workshops, Value Stream Mapping, OKR alignment sessions.

Common challenge: Leaders propose too many use cases at once, spreading resources thin. Prioritize ruthlessly: pick the use case with the highest business impact and the lowest data complexity.

Real-world example: A mid-size logistics firm wanted AI for 12 different problems simultaneously. After our assessment, we focused them on a single use case, predictive shipment delay alerts, which reduced customer churn by 18% in 6 months.

Step 2: Audit Your Data Assets

What it is: A structured inventory of all data sources, covering their quality, volume, format, accessibility, and labeling status. This is your AI fuel check.

Why it matters: AI models are only as good as the data they learn from. "Garbage in, garbage out" is not just a cliché; it's the most common reason enterprise AI models fail in production.

Tools used: Apache Atlas, Alation, dbt, Microsoft Purview, custom data profiling scripts.

Best practices:

Profile every dataset for completeness, consistency, and recency

Identify PII and apply appropriate data governance policies

Document data lineage from source to consumption layer

Flag datasets that require third-party consent or regulatory clearance
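The first best practice above, profiling for completeness and recency, can be sketched with a custom script of the kind mentioned under "Tools used". This is a minimal illustration using only the Python standard library; the function names, the column names, and the sample rows are assumptions, and a real audit would run against your actual sources or a catalog tool.

```python
from datetime import date


def completeness(rows, column):
    """Share of rows where `column` is present and non-empty (0.0 to 1.0)."""
    if not rows:
        return 0.0
    filled = sum(1 for r in rows if r.get(column) not in (None, ""))
    return filled / len(rows)


def days_since_latest(rows, date_column, today):
    """Recency: days between `today` and the newest dated record, or None."""
    dates = [r[date_column] for r in rows if r.get(date_column) is not None]
    if not dates:
        return None
    return (today - max(dates)).days


# Hypothetical sample records for illustration.
rows = [
    {"shipment_id": "A1", "carrier": "X", "updated": date(2026, 1, 10)},
    {"shipment_id": "A2", "carrier": "", "updated": date(2026, 1, 12)},
    {"shipment_id": "A3", "carrier": None, "updated": date(2026, 1, 5)},
    {"shipment_id": "A4", "carrier": "Y", "updated": None},
]

carrier_completeness = completeness(rows, "carrier")
staleness_days = days_since_latest(rows, "updated", today=date(2026, 1, 15))
print(f"carrier completeness: {carrier_completeness:.0%}, "
      f"days since latest update: {staleness_days}")
```

Consistency checks (for example, matching formats across sources) depend heavily on the dataset, so they are left out of this sketch.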

For hands-on help with data infrastructure, explore our Data Integration Consulting Services.

Step 3: Assess Technical Infrastructure

What it is: Evaluate your compute resources, cloud maturity, MLOps pipeline, API layer, and data platform against the demands of the target AI use case.

Why it matters: A machine learning model that works on a data scientist's laptop is not the same as a production-grade AI system serving thousands of requests per second. Infrastructure gaps kill more AI projects than model errors do.

Tools used: AWS SageMaker, Azure ML, Google Vertex AI, MLflow, Kubeflow, Docker/Kubernetes.

Key questions to answer:

Can we retrain models automatically when data drifts?

Do we have CI/CD pipelines for ML model deployment?

Is our API infrastructure capable of real-time inference?
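To make the first question concrete, one common way to detect data drift is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time with its distribution in production. The sketch below is an illustration, not a prescribed method: the `psi` and `needs_retraining` names, the ten equal-width bins, and the 0.2 threshold (a widely used rule of thumb) are all assumptions you would tune for your own models.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a recent sample.

    Bins are equal-width over the baseline range; values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant features

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(expected, actual, threshold=0.2):
    """Assumed rule of thumb: PSI above 0.2 signals significant drift."""
    return psi(expected, actual) > threshold


# Hypothetical feature values: a baseline and a shifted production sample.
baseline = list(range(100))
shifted = [v + 50 for v in baseline]

print("retrain on identical data:", needs_retraining(baseline, baseline))
print("retrain on shifted data:  ", needs_retraining(baseline, shifted))
```

In a pipeline, a check like this would typically run on a schedule and trigger the retraining job rather than print a result.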

Step 4: Evaluate Talent & Organizational Capability

What it is: Map your existing human capital against the skills required for your target AI use case, from data engineers and ML engineers to domain experts and AI ethicists.

Why it matters: According to the World Economic Forum, the global AI talent gap will persist through 2028.

Best practice: Build a skills matrix. Identify which roles you'll hire, which you'll upskill, and which you'll outsource to trusted AI partners.

Step 5: Map Governance & Compliance Requirements

What it is: Identify all applicable regulatory frameworks (EU AI Act, GDPR, HIPAA, CCPA, NIST AI RMF) and the internal governance requirements that apply to your AI use case.

Why it matters: Retrofitting compliance into a deployed AI system costs 5–8× more than building it in from the start. This step is not optional; it's foundational.

Explore our AI Security & Compliance Services to understand what compliance-by-design looks like in practice.

Step 6: Score, Benchmark & Gap Analysis

What it is: Compile the findings from Steps 1–5 into a structured maturity scorecard. Benchmark your scores against industry peers using the NIST AI RMF or MIT AI Readiness Index.

Output: A clear readiness score per pillar, a prioritized gap list, and an estimated effort-to-close for each gap.
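One way to turn the scorecard into a prioritized gap list is sketched below. The ordering rule (largest gap first, then lowest effort) is an assumption made for illustration, as are the `Gap` type, the target scores, and the effort estimates; your own prioritization may weigh business impact differently.

```python
from typing import NamedTuple


class Gap(NamedTuple):
    pillar: str
    score: int         # current maturity, 1-5
    target: int        # maturity needed for the chosen use case
    effort_weeks: int  # estimated effort-to-close

    @property
    def size(self):
        return self.target - self.score


def prioritize(gaps):
    """Keep only open gaps; order by largest gap, then by lowest effort."""
    open_gaps = [g for g in gaps if g.size > 0]
    return sorted(open_gaps, key=lambda g: (-g.size, g.effort_weeks))


# Hypothetical scorecard entries for illustration.
scorecard = [
    Gap("Data Maturity", score=2, target=4, effort_weeks=12),
    Gap("AI Governance", score=1, target=3, effort_weeks=6),
    Gap("Technical Infrastructure", score=3, target=4, effort_weeks=8),
    Gap("Business Strategy", score=4, target=4, effort_weeks=0),
]

for rank, gap in enumerate(prioritize(scorecard), start=1):
    print(f"{rank}. {gap.pillar}: gap {gap.size}, ~{gap.effort_weeks} weeks")
```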

Step 7: Build Your AI Implementation Roadmap

What it is: Convert the gap analysis into a phased, time-boxed roadmap with owners, milestones, and budget allocations for each AI initiative.

Best practice: Structure the roadmap in three horizons: H1 (0–6 months: foundational fixes), H2 (6–18 months: pilot deployments), and H3 (18–36 months: scale and expand).

For a detailed breakdown of what each horizon looks like, read our AI Implementation Roadmap for Enterprise.

→ Get Your Free AI Readiness Assessment Report: receive your organization's readiness score across all 6 pillars, plus a prioritized action plan, in 48 hours. No commitment required.

Enterprise AI Governance Framework: Structure & Tools

An enterprise-ready AI governance framework is not a single policy document. It's an operating system: a set of interconnected policies, roles, tools, and review processes that ensure AI behaves as intended, at scale, over time.

The Three Layers of AI Governance

Layer 1: Strategic Governance. Owned by the C-suite and AI steering committee. This layer defines the organization's AI ethics principles, acceptable use policies, and risk appetite. It answers: "What AI should we build, and why?"

Layer 2: Operational Governance. Owned by AI/ML engineering teams and data stewards. This layer implements governance as code: automated checks embedded in your MLOps pipeline that enforce data quality standards, bias thresholds, and model performance guardrails before any model reaches production. The concept of AI governance as code is one of the most important shifts in enterprise AI practice in 2026. Rather than relying on manual review gates, governance rules are versioned, tested, and enforced automatically, just like software tests in a CI/CD pipeline.

Layer 3: Monitoring & Accountability. Owned by a cross-functional AI oversight team. This layer handles AI model drift monitoring, bias audit workflows, incident response, and stakeholder reporting. Without this layer, you're flying blind after go-live.
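To illustrate what "governance as code" in Layer 2 can look like, here is a minimal sketch of a pre-deployment gate. It is not a specific platform's API: the `Policy` class, the `evaluate` function, the metric names, and every threshold are hypothetical, and in practice the limits would come from the risk appetite set in Layer 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    name: str
    metric: str
    limit: float
    direction: str  # "min": value must be >= limit; "max": value must be <= limit


# Hypothetical rules covering the three checks named in Layer 2.
POLICIES = (
    Policy("data quality", "completeness", 0.95, "min"),
    Policy("bias threshold", "demographic_parity_gap", 0.10, "max"),
    Policy("performance guardrail", "auc", 0.80, "min"),
)


def evaluate(metrics, policies=POLICIES):
    """Return violation messages; an empty list means the gate passes."""
    violations = []
    for p in policies:
        value = metrics.get(p.metric)
        if value is None:
            violations.append(f"{p.name}: metric '{p.metric}' not reported")
        elif p.direction == "min" and value < p.limit:
            violations.append(f"{p.name}: {p.metric}={value} is below {p.limit}")
        elif p.direction == "max" and value > p.limit:
            violations.append(f"{p.name}: {p.metric}={value} is above {p.limit}")
    return violations


# Hypothetical metrics reported by a candidate model's evaluation run.
candidate = {"completeness": 0.97, "demographic_parity_gap": 0.14, "auc": 0.86}
problems = evaluate(candidate)
if problems:
    print("Deployment blocked:")
    for line in problems:
        print(" -", line)
else:
    print("All governance checks passed.")
```

Because the rules live in a plain data structure, they can be kept in version control and covered by unit tests, which is the property the paragraph above describes. A missing metric counts as a violation so that a model cannot pass simply by not reporting a number.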

AI Governance Platform Buyer's Guide: Key Tools in 2026

| Tool Category | Leading Platforms | Key Capability | What It Enables |
|---|---|---|---|
| AI Governance Platforms | Arthur AI, Fiddler AI, Monitaur, ModelOp | Centralized model registry + policy enforcement | Helps organizations manage AI models in one place while enforcing governance policies consistently across the lifecycle |
| Model Monitoring | WhyLabs, Arize AI, Evidently AI, Datadog ML | AI model drift monitoring, performance alerts | Enables continuous tracking of model performance, detecting drift and triggering alerts to maintain accuracy in production |
| Bias & Fairness Auditing | IBM OpenScale, Holistic AI, Credo AI | AI bias audit playbook automation | Supports identification and mitigation of bias through automated fairness checks and structured audit workflows |
| AI Lifecycle Management | MLflow, Weights & Biases, Neptune.ai | AI model lifecycle management end-to-end | Manages the full AI lifecycle including experimentation, versioning, deployment, and tracking of models |

See how our Veda AI Data Analytics Platform integrates governance controls natively into the analytics workflow.

Pro Tip: When evaluating any AI governance platform, prioritize tools that support policy-as-code natively. If your governance depends on a spreadsheet and an email thread, it's not governance; it's hope.

AI Risk Management Framework: Controls That Actually Work

The AI risk management framework is where your readiness assessment translates into active protection. Risk in AI falls into four categories, and each requires a different control strategy.

The 4 Risk Categories in Enterprise AI

Technical Risk: Model errors, drift, hallucination, infrastructure failures. Mitigation: Continuous AI model drift monitoring, automated retraining pipelines, and AI hallucination risk controls such as output validation layers, confidence thresholds, and human-in-the-loop checkpoints for high-stakes decisions.

Ethical & Bias Risk: Discriminatory outcomes in hiring, lending, healthcare, or law enforcement AI. Mitigation: Pre-deployment AI bias audit playbook, diverse and representative training data, and ongoing fairness monitoring in production.

Regulatory & Legal Risk: Non-compliance with EU AI Act, GDPR, HIPAA, or sector-specific regulations. Mitigation: Compliance mapping before build, legal review gates, and automated compliance checks embedded in your MLOps pipeline.

Reputational Risk: Public trust erosion from AI failures, controversial decisions, or data breaches. Mitigation: Transparent AI decision logs, a clear public AI ethics statement, and a rapid incident response playbook.
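The Technical Risk controls listed above (output validation, confidence thresholds, and human-in-the-loop checkpoints) can be combined into a single routing step. The following is a minimal sketch under stated assumptions: the `route` function, the allowed labels, the three outcome names, and the 0.90 threshold are hypothetical and would be set per use case.

```python
# Hypothetical set of outputs the downstream system knows how to handle.
ALLOWED_LABELS = {"approve", "decline"}


def route(label, confidence, high_stakes, threshold=0.90):
    """Decide what happens to a single model output.

    1. Output validation: unknown labels or impossible confidences are rejected.
    2. Human-in-the-loop: high-stakes decisions always go to a reviewer.
    3. Confidence threshold: low-confidence outputs also go to a reviewer.
    """
    if label not in ALLOWED_LABELS or not 0.0 <= confidence <= 1.0:
        return "reject_output"
    if high_stakes or confidence < threshold:
        return "human_review"
    return "auto_accept"


# Hypothetical model outputs for illustration.
print(route("approve", 0.97, high_stakes=False))  # confident, routine
print(route("approve", 0.97, high_stakes=True))   # confident, but high stakes
print(route("decline", 0.62, high_stakes=False))  # low confidence
print(route("maybe", 0.99, high_stakes=False))    # label fails validation
```

Sending every high-stakes decision to review regardless of confidence reflects the point made in this section: a model can be confidently wrong.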

AI vs. Traditional Automation: Why the Risk Profile Is Different

Many risk managers apply traditional automation risk frameworks to AI systems. This is a critical mistake. The two risk profiles differ in one fundamental way: traditional automation fails predictably. It does exactly what it was programmed to do, or it errors out. AI fails probabilistically. It can produce wrong answers confidently, in novel situations it was never programmed for.

This means AI risk management must be ongoing, not just pre-deployment. Your framework must include continuous monitoring, anomaly detection, and human oversight loops, especially for high-stakes use cases.

Key Takeaway: Traditional IT risk frameworks are necessary but not sufficient for AI. You need an AI-specific risk layer on top of your existing enterprise risk management structure.

EU AI Act & NIST AI RMF: Compliance in 2026

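If your organization places AI systems on the EU market, or their outputs are used in the EU, EU AI Act obligations are phasing in rather than arriving all at once. Under the timetable set in the Act, prohibitions on unacceptable-risk practices have applied since February 2025, most remaining obligations apply from 2 August 2026, and rules for AI embedded in regulated products follow in August 2027. This is the world's first comprehensive AI regulation, and it classifies AI systems by risk level, from "minimal risk" (no obligations) to "unacceptable risk" (outright banned).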

EU AI Act: What Enterprises Must Do Now

Classify all AI systems by risk category (minimal, limited, high, unacceptable)

For high-risk AI systems (hiring, credit scoring, healthcare, critical infrastructure): register in the EU AI database and conduct conformity assessments

Implement human oversight mechanisms for high-risk systems

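Ensure transparency: users must know when they're interacting with AI

For high-risk deployments, retain technical documentation for 10 years after the system is placed on the market, and keep automatically generated logs for at least six months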
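The first item in the checklist above, classifying every AI system by risk category, usually starts with a simple inventory. The sketch below is illustrative only and is not a legal classification tool: the `RiskTier` enum mirrors the four tiers named in this section, the high-risk domains are just the examples this article lists, and `provisional_tier` is a hypothetical helper that deliberately returns `None` (meaning "needs legal review") for anything else.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Only the high-risk examples named in this article; not a legal mapping.
HIGH_RISK_DOMAINS = {"hiring", "credit scoring", "healthcare", "critical infrastructure"}


def provisional_tier(domain):
    """Return RiskTier.HIGH for listed domains; None means 'needs legal review'."""
    if domain.strip().lower() in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    return None


# Hypothetical system inventory for illustration.
inventory = {
    "resume-screener": "Hiring",
    "loan-scoring-v2": "credit scoring",
    "support-chatbot": "customer service",
}

for system, domain in inventory.items():
    tier = provisional_tier(domain)
    status = tier.value if tier else "unclassified: needs legal review"
    print(f"{system}: {status}")
```

Defaulting to "needs legal review" rather than "minimal" avoids understating obligations for systems the lookup does not recognize.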

NIST AI RMF Implementation

For US-based enterprises, the NIST AI RMF provides a voluntary but increasingly expected governance standard. The framework's four core functions (GOVERN, MAP, MEASURE, MANAGE) map directly to the pillars of an enterprise AI readiness framework. Organizations that implement NIST AI RMF before regulatory pressure arrives are 60% faster to achieve compliance when regulations formally hit.

Top Challenges in Enterprise AI Adoption

Understanding the barriers is just as important as knowing the path forward. In our work across manufacturing, financial services, healthcare, and retail, these challenges consistently derail AI adoption in the enterprise.

| Challenge | Category | Frequency | Impact | Proven Mitigation |
|---|---|---|---|---|
| Poor data quality / siloed data | Data | ★★★★★ | Critical | Data catalog + data contracts |
| No governance policy | Governance | ★★★★☆ | Critical | AI governance platform + policy-as-code |
| Talent gaps | Talent | ★★★★☆ | High | Upskilling + AI platform abstraction |
| Legacy system integration | Infrastructure | ★★★★☆ | High | API-first architecture + phased migration |
| Unclear ROI | Strategy | ★★★★☆ | High | Pre-defined success metrics before build |
| AI model drift in production | Technical | ★★★☆☆ | Medium | Continuous monitoring + auto-retraining |
| Regulatory uncertainty | Compliance | ★★★☆☆ | Medium | Proactive compliance mapping |

For a broader view of the pitfalls, read our article on the 5 biggest AI mistakes enterprises make and why 70% of AI projects fail.

The Future of AI Readiness: 2026–2030

The AI readiness landscape is shifting fast. Here are five trends that will redefine what "enterprise-ready AI" means over the next four years.

1. Responsible AI Framework and Governance Becomes Non-Negotiable

By 2028, enterprise procurement processes will require vendors to demonstrate certified responsible AI framework and governance practices, similar to how SOC 2 compliance became table stakes for SaaS. Organizations building this capability now will have a significant competitive advantage.

2. AI Model Lifecycle Management Becomes Fully Automated

The manual, human-intensive process of retraining, validating, and deploying ML models is rapidly giving way to automated AI model lifecycle management platforms. By 2027, the majority of enterprise ML models will be managed by automated pipelines, with human oversight only for edge cases.

3. AutoML Democratizes AI for Non-Technical Teams

AutoML platforms are eliminating the need for expert ML engineering for a growing set of use cases. Business analysts will build and deploy predictive models directly, reducing time from business problem to AI solution from months to days.

4. Edge AI Expands Real-Time Decision-Making

As IoT devices become more powerful, AI inference is moving to the edge, running on factory floor sensors, retail POS systems, and autonomous vehicles without cloud round-trips. Enterprises that invest in edge-ready AI architecture now will be positioned for this shift.

5. Explainable AI (XAI) Becomes a Regulatory Requirement

The EU AI Act already mandates explainability for high-risk AI systems. By 2030, XAI will be expected across all AI categories. The "black box" era of enterprise AI is ending. Every AI decision that affects a person (a loan approval, a medical diagnosis recommendation, a hiring filter) will need to be explainable in human terms.

Organizations progressing through these trends typically align with structured AI maturity model stages that define how AI capabilities evolve over time.

Conclusion: Your AI Readiness Journey Starts Now

The organizations that will lead in AI through the rest of this decade are not necessarily those with the biggest budgets or the most data scientists. They're the ones that took readiness seriously before scaling ambition.

An AI readiness framework is not a bureaucratic exercise. It's the strategic infrastructure that separates AI programs that transform businesses from AI experiments that drain them. From your data foundations to your governance structures to your regulatory compliance posture, every pillar in this guide represents a lever you can pull to increase your odds of AI success. At Samta.ai, we've helped 50+ enterprises move from AI-curious to AI-capable using exactly the frameworks, tools, and methodologies described here.

Your next step is simple.

→ Contact Our AI Strategy Team: if you'd prefer to talk through your specific situation with an expert first, we're here.

About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

Tatva: AI-driven data intelligence for governed analytics and insights

VEDA: Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI: Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

Samta.ai provides the strategic consulting and technical engineering needed to align your human capital with your AI goals, ensuring a frictionless

Frequently Asked Questions About AI Readiness Framework

What is an AI readiness framework, and why do I need one?

An AI readiness framework is a structured methodology for evaluating and building your organization's capacity to deploy and govern AI systems safely and effectively. Organizations that skip this step fail at dramatically higher rates, and a failed AI project costs far more than the assessment that could have prevented it. The framework identifies gaps across data, infrastructure, governance, talent, strategy, and culture before those gaps become production failures. Think of it as a pre-flight checklist for enterprise AI.

What is the difference between AI readiness and AI maturity?

AI readiness is a point-in-time question: are you ready to start or scale a specific AI initiative right now? AI maturity is a longer arc: how sophisticated and embedded is your organization's overall AI capability over time? Readiness is project-focused. Maturity is organization-wide. You can have high AI maturity in one business unit and near-zero readiness in another, and most enterprises do.

How long does an AI readiness assessment take?

A focused assessment covering one AI use case and one business unit takes 3–6 weeks with the right methodology and a dedicated cross-functional team. An enterprise-wide assessment covering all six pillars across multiple business units can take 8–16 weeks. A focused 3-week assessment that gets you to action is far more valuable than a 6-month study that results in a 200-page report nobody reads. Read our full AI Readiness Assessment guide for details.

What is the NIST AI RMF and how does it relate to an AI readiness framework?

The NIST AI RMF provides a structured approach to identifying and managing AI risks through four core functions: GOVERN, MAP, MEASURE, and MANAGE. These map directly to an enterprise AI readiness framework. We recommend using NIST AI RMF as the risk management component of your broader readiness program, especially if you serve US government clients or operate in regulated sectors.