Building an AI implementation roadmap that enterprise teams can actually execute requires more than a technology selection decision. It requires a phased, time-bound plan that moves from validated pilot to governed production deployment without accumulating technical debt or organizational resistance. Most enterprise AI initiatives stall not because the technology fails, but because the roadmap was never operationalized beyond a proof of concept. This guide provides a structured 12-month framework covering pilot design, infrastructure readiness, governance integration, change management, and production scaling, built for B2B leaders, IT teams, and operations functions responsible for delivering measurable AI outcomes.

Key Takeaways

A structured enterprise AI implementation roadmap reduces the failure rate of AI initiatives by forcing phase-gate decisions before production investment is committed.

The 12-month timeline is a practical benchmark, not a constraint, reflecting the actual time required to validate data readiness, build governance controls, and achieve organizational adoption in most mid-to-large enterprises.

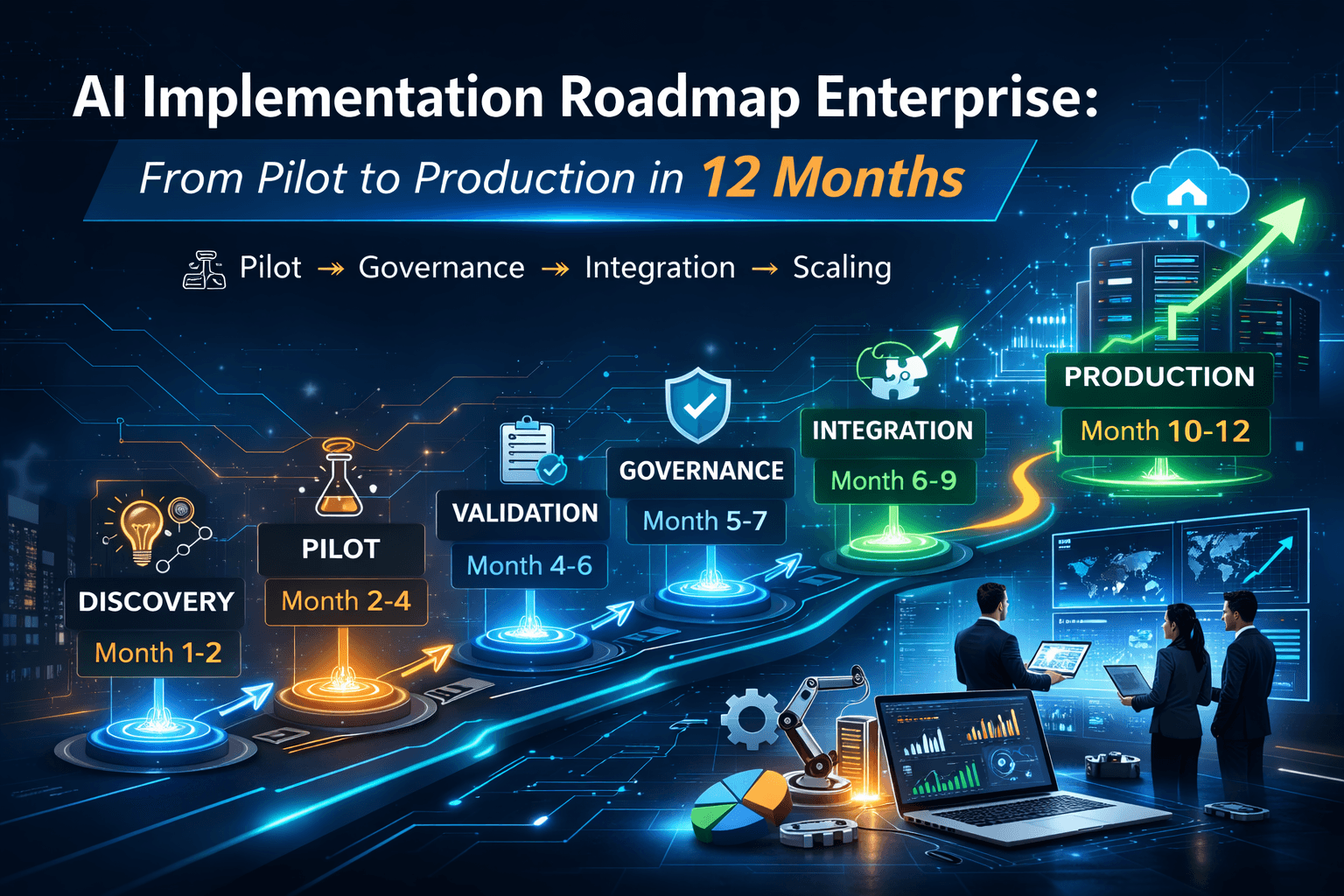

The AI implementation lifecycle has five core stages: Discovery, Pilot, Validation, Governance and Integration, and Production Scaling, each with defined entry and exit criteria. The 12-month roadmap in this guide breaks these stages into seven phases.

An AI pilot project must be scoped narrowly enough to deliver a measurable outcome in 60 to 90 days. Broad pilots rarely produce actionable evidence for production decisions.

An AI governance framework must be integrated at the pilot stage, not retrofitted after production deployment. Late-stage governance is the primary cause of regulatory and compliance exposure.

Enterprise AI success correlates more strongly with data maturity, organizational change management, and executive sponsorship than with model sophistication or vendor selection.

What Does an AI Implementation Roadmap Mean for Enterprises in 2026?

An enterprise AI implementation roadmap is a structured, phase-gated plan that defines the sequence of decisions, investments, infrastructure requirements, and organizational changes needed to move an AI initiative from initial concept to a production system delivering measurable business value. In 2026, the definition has expanded beyond technical deployment. A roadmap now includes regulatory compliance checkpoints (EU AI Act, NIST AI RMF), data governance integration milestones, change management workstreams, and post-production monitoring protocols. Organizations that treat a roadmap as a purely technical delivery plan routinely underestimate the organizational complexity and governance overhead that determine whether production AI sustains value or deteriorates.

The enterprise AI landscape in 2026 is defined by three converging pressures: regulatory obligations that require documented governance before high-risk AI goes live, board-level accountability for AI-driven decisions, and a maturing vendor ecosystem that makes tooling selection more consequential than it was in earlier adoption cycles. An AI deployment roadmap differs from a standard software implementation plan in one critical dimension: model performance is not static. Unlike a conventional deployed application, a production AI model degrades over time as data distributions shift. The roadmap must account for ongoing monitoring, retraining cycles, and model lifecycle management, not just initial go-live.
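The point about shifting data distributions can be made concrete with a drift metric. The sketch below computes the Population Stability Index (PSI), one common way to compare a feature's distribution at validation time with its distribution in production. It is illustrative only: the function names, the ten-bucket default, and the 0.25 review threshold (a widely quoted rule of thumb, not a figure from this guide) are assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI for one numeric feature, using equal-width buckets over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread; PSI is undefined")
    width = (hi - lo) / bins

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge buckets.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # eps keeps empty buckets from producing log(0).
        return [max(c / len(values), eps) for c in counts]

    expected = bucket_shares(baseline)
    actual = bucket_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def needs_review(baseline, current, threshold: float = 0.25) -> bool:
    """Flag a feature for a retraining review (threshold is an assumed rule of thumb)."""
    return population_stability_index(baseline, current) > threshold


if __name__ == "__main__":
    validation_window = [i / 100 for i in range(100)]
    production_window = [0.5 + i / 200 for i in range(100)]  # shifted upward
    print(needs_review(validation_window, production_window))
```

In practice a check like this would run per feature on a schedule, with the result feeding whatever retraining trigger the monitoring platform provides.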

The AI implementation lifecycle connects six operational domains: use case prioritization, data readiness assessment, model development and validation, infrastructure provisioning, governance and compliance integration, and organizational adoption. A roadmap without all six is incomplete and will encounter preventable failures in the domains that were skipped. For a foundational understanding of how AI governance integrates into the implementation lifecycle, see Agentic AI Governance Framework and The Future of AI Governance, both published by the Samta.ai research team.

The 12-Month AI Implementation Roadmap: Phase-by-Phase Breakdown

The table below maps each phase of a structured enterprise AI implementation roadmap to its timeline, objectives, key activities, exit criteria, and the Samta.ai product that supports each phase. The VEDA platform appears first as the primary product reference.

| Phase | Timeline | Objective | Key Activities | Exit Criteria | Samta.ai Product |
| --- | --- | --- | --- | --- | --- |
| VEDA Platform by Samta.ai | Full 12 months | End-to-end AI implementation visibility: data pipeline monitoring, model performance tracking, drift detection, and governed production analytics across all phases | Pipeline observability, model registry, drift alerts, performance dashboards, retraining triggers, governance audit logs | Measurable production outcome with documented performance baseline and monitored KPIs | |
| Phase 1: Discovery and Use Case Prioritization | Month 1 to 2 | Identify the highest-value, lowest-risk AI use case with sufficient data availability | Business process audit, data maturity assessment, stakeholder interviews, ROI estimation, risk tier classification | Single prioritized use case with defined success metrics and data availability confirmed | VEDA: Data readiness assessment dashboard |
| Phase 2: Pilot Design and Execution | Month 2 to 4 | Build and test a minimum viable model against real business data in a controlled environment | Data pipeline construction, baseline model development, feature engineering, initial bias testing, pilot environment setup | Model achieves baseline performance threshold. Pilot success criteria met within 60 to 90 days | VEDA: Experiment tracking and pipeline monitoring |
| Phase 3: Validation and Business Case | Month 4 to 6 | Validate model performance against production-representative data and build financial and operational business case for scale | Holdout dataset testing, stakeholder review, ROI modeling, performance benchmarking vs baseline, independent model challenge | Validated performance metrics. Signed-off business case. Production approval from governance committee | VEDA: Performance benchmarking reports |
| Phase 4: Governance and Compliance Integration | Month 5 to 7 | Integrate AI governance controls, risk documentation, and regulatory compliance requirements before production deployment | Model documentation (Model Card), bias audit, explainability outputs (SHAP/LIME), regulatory mapping, human oversight protocol, incident response design | Completed governance documentation. Compliance sign-off. Model registered in enterprise AI inventory | VEDA: Governance audit logs and model registry |
| Phase 5: Infrastructure and Integration | Month 6 to 9 | Deploy model to production infrastructure and integrate with existing enterprise systems (ERP, CRM, data warehouse) | MLOps pipeline setup, API integration, CI/CD for model retraining, security review, performance load testing, data pipeline productionization | Model live in production environment. Integration tests passed. Monitoring dashboards operational | VEDA: Production monitoring and alerting |
| Phase 6: Change Management and Adoption | Month 7 to 10 | Drive organizational adoption, train end users, and establish feedback loops between users and model owners | Training program delivery, SOPs updated, user acceptance testing, feedback mechanism design, champion network activation | Adoption rate milestone achieved. User feedback integrated. Model owner accountability confirmed | VEDA: User adoption and feedback tracking |
| Phase 7: Production Monitoring and Scaling | Month 10 to 12 | Operate model in production with continuous monitoring and evaluate expansion to adjacent use cases | Drift monitoring, performance reporting, retraining cadence established, expansion use case assessment, lessons-learned documentation | 90-day production stability report. Retraining protocol documented. Expansion roadmap drafted | VEDA: Drift detection, retraining triggers, scaling dashboard |

The timeline ranges overlap intentionally. Governance integration and infrastructure work run in parallel with later validation phases in well-resourced implementations.
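The phase timelines and exit criteria above lend themselves to a simple machine-readable form. The following is a minimal sketch, not a prescribed format: the `Phase` class, the shortened phase names, the example criterion labels, and their true/false values are all hypothetical. It also assumes that two phases sharing only a boundary month (for example Month 2) count as a handoff rather than as parallel work.

```python
from dataclasses import dataclass, field
from itertools import combinations
from typing import Optional


@dataclass
class Phase:
    name: str
    start_month: int
    end_month: int
    # Exit criterion -> whether it has been met (hypothetical tracking format).
    exit_criteria: dict[str, bool] = field(default_factory=dict)

    def gate_passed(self) -> bool:
        # A phase with no recorded criteria is treated as not yet passed.
        return bool(self.exit_criteria) and all(self.exit_criteria.values())


def overlaps(a: Phase, b: Phase) -> bool:
    # Strict comparison: sharing only a boundary month is not an overlap.
    return a.start_month < b.end_month and b.start_month < a.end_month


def parallel_pairs(phases: list[Phase]) -> list[tuple[str, str]]:
    """List every pair of phases that run in parallel for at least part of a month range."""
    return [(a.name, b.name) for a, b in combinations(phases, 2) if overlaps(a, b)]


def first_open_gate(phases: list[Phase]) -> Optional[Phase]:
    """Return the earliest phase whose exit criteria are not all met, or None."""
    for phase in phases:
        if not phase.gate_passed():
            return phase
    return None


# Month ranges taken from the roadmap table; criteria values are made up.
ROADMAP = [
    Phase("Discovery", 1, 2, {"use case prioritized": True, "data availability confirmed": True}),
    Phase("Pilot", 2, 4, {"baseline performance threshold met": True}),
    Phase("Validation", 4, 6, {"business case signed off": False}),
    Phase("Governance", 5, 7),
    Phase("Infrastructure", 6, 9),
    Phase("Change Management", 7, 10),
    Phase("Production Monitoring", 10, 12),
]

if __name__ == "__main__":
    print(parallel_pairs(ROADMAP))
    print(first_open_gate(ROADMAP).name)
```

Under the boundary-month assumption, the parallel pairs are Validation with Governance, Governance with Infrastructure, and Infrastructure with Change Management, which matches the overlap described above.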

Practical Use Cases: Where the 12-Month AI Implementation Roadmap Delivers Results

The following use cases represent enterprise scenarios where a structured AI implementation roadmap has been applied to move from pilot to governed production within a 12-month window. Each scenario illustrates a distinct implementation pattern, risk profile, and organizational consideration.

1. Financial Services: Credit Risk Model Replacement

A regional bank replaces a rules-based credit scoring system with an ML model. Phase 1 involves auditing the existing decision logic and mapping the training data to regulatory fair lending requirements. The pilot runs on a holdout dataset of historical applications. Validation requires independent model challenge by the risk management team. Governance integration is the most time-intensive phase: adverse action explainability and bias auditing across protected classes must be documented before any production approval. Infrastructure integration involves the loan origination system. The change management workstream trains underwriters on model outputs and override protocols. Full production stability is typically achieved by month 10 to 12.

Key complexity driver: Regulatory documentation and independent model validation cannot be compressed below 8 to 10 weeks in this sector regardless of model readiness.

2. Healthcare: Clinical Decision Support Tool

A hospital network deploys a machine learning model to prioritize high-risk patient outreach for chronic disease management. Discovery focuses on EHR data quality and HIPAA compliance scope. The pilot operates on a single ward or patient cohort to contain risk. Validation includes clinical workflow integration testing and clinician review of model recommendations. Governance integration requires FDA SaMD framework alignment and a Predetermined Change Control Plan if the model will be updated post-deployment. Change management is the single highest-effort phase. Clinical staff adoption requires structured training, evidence of model performance, and clear escalation protocols. Production monitoring must include outcome tracking against clinical KPIs, not just model performance metrics.

Key complexity driver: Clinical workflow integration and staff adoption consistently take longer than the model development itself.

3. Retail and E-Commerce: Demand Forecasting Replacement

An enterprise retailer replaces spreadsheet-based demand planning with an ML forecasting model. The pilot is scoped to a single product category and distribution region to limit blast radius. Data readiness assessment reveals SKU-level historical data gaps that require 4 to 6 weeks of remediation before model training can begin. This is a common finding that delays the timeline if it is not identified in Phase 1. Infrastructure integration with the ERP system is the primary technical complexity. Governance requirements are lower than in financial or healthcare use cases, allowing faster progression from validation to production. The 12-month roadmap typically achieves full deployment across product categories by month 10, with the remaining two months spent establishing monitoring and retraining protocols.

Key complexity driver: Data quality remediation at scale is the primary timeline risk in this sector.
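A Phase 1 data readiness check for this scenario can be as simple as scanning the sales history for missing periods per SKU. The sketch below is one hypothetical way to do that. The `(sku, week_start)` record shape, the weekly granularity, and the sample SKU names are assumptions for illustration, not details from the retailer scenario.

```python
from collections import defaultdict
from datetime import date, timedelta


def weekly_gaps(records, first_week: date, last_week: date) -> dict:
    """Map each SKU to the week-start dates that have no sales record.

    records: iterable of (sku, week_start) tuples, where week_start is a date.
    SKUs that never appear in records are not reported; checking against a
    product master list would be a separate step.
    """
    seen = defaultdict(set)
    for sku, week_start in records:
        seen[sku].add(week_start)

    # Build the full list of weeks the history is expected to cover.
    expected = []
    week = first_week
    while week <= last_week:
        expected.append(week)
        week += timedelta(weeks=1)

    gaps = {}
    for sku, weeks in seen.items():
        missing = [w for w in expected if w not in weeks]
        if missing:
            gaps[sku] = missing
    return gaps


if __name__ == "__main__":
    history = [
        ("SKU-001", date(2025, 1, 6)),
        ("SKU-001", date(2025, 1, 13)),
        ("SKU-001", date(2025, 1, 20)),
        ("SKU-002", date(2025, 1, 6)),
        ("SKU-002", date(2025, 1, 20)),  # the week of 13 January is missing
    ]
    print(weekly_gaps(history, date(2025, 1, 6), date(2025, 1, 20)))
```

Running a scan like this before the pilot starts turns the remediation effort into a known quantity rather than a mid-pilot surprise.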

4. HR and Talent Operations: Attrition Prediction Model

An enterprise HR team deploys a model to identify employees at high risk of voluntary attrition, enabling proactive retention interventions. Discovery surfaces GDPR and CCPA compliance obligations around employee data processing. The pilot is scoped to a single business unit. Governance integration must address employee data consent, model transparency obligations, and restrictions on automated HR decision-making under GDPR Article 22. Change management is particularly sensitive. HR leadership and employee relations teams must be engaged early to prevent organizational resistance to algorithmic tools in people management. Adoption requires manager training on how to use model outputs as one input, not a deterministic decision.

Key complexity driver: Legal and ethical complexity of employee data processing frequently extends Phase 4 beyond initial estimates.

5. Manufacturing: Predictive Maintenance for Industrial Equipment

A manufacturing operation deploys a predictive maintenance model on high-value production equipment to reduce unplanned downtime. Discovery assesses sensor data availability, sampling frequency, and historical failure event labeling. Pilot scope is a single production line or equipment class. Infrastructure integration with SCADA/MES systems and IoT data pipelines is the primary technical challenge. Governance requirements are operational rather than regulatory. Documentation focuses on model override protocols, maintenance team training, and fallback procedures if the model generates false positive maintenance triggers. Production scaling involves rollout across additional equipment classes based on pilot performance evidence.

Key complexity driver: IoT data pipeline reliability and sensor data quality are the most common technical blockers in this sector.

For a structured approach to managing the organizational complexity that runs through all five of these scenarios, see AI Change Management Strategy and How to Measure AI, both from the Samta.ai knowledge library.

Moving from Pilot to Production in 12 Months Starts with the Right Partner

Samta.ai has the AI, ML, and data engineering expertise to design and execute your enterprise AI implementation roadmap from use case prioritization to governed production deployment. Book a Demo with Samta.ai →

Limitations and Risks: What the 12-Month Roadmap Does Not Guarantee

A structured implementation roadmap reduces risk. It does not eliminate it. The following limitations are the most frequently encountered constraints that extend timelines, increase costs, or cause initiative failure.

Data Readiness Is Consistently Underestimated

The single most common cause of pilot failure and timeline extension is data quality issues discovered after the pilot begins. Historical data gaps, inconsistent labeling, undocumented schema changes, and access control delays are not visible until a team actually attempts to build a training pipeline. Phase 1 data readiness assessment must be treated as a hard prerequisite, not a parallel workstream.

Organizational Readiness Lags Technical Readiness

A model can be technically production-ready while simultaneously facing organizational blockers: teams unwilling to act on model outputs, managers who override the model systematically, or end users who never received adequate training. Technical delivery timelines and adoption timelines are not the same and must be planned and measured separately.

The 12-Month Timeline Assumes Adequate Resourcing

Organizations that assign part-time resources to AI implementation projects, treating them as a side project alongside existing responsibilities, routinely experience 2x to 3x timeline overruns. The 12-month benchmark assumes dedicated data engineering, ML engineering, and project management capacity throughout the lifecycle.

Governance Integration Cannot Be Compressed in Regulated Sectors

Financial services, healthcare, and public sector deployments have minimum governance lead times driven by regulatory requirements, independent review processes, and procurement cycles that are outside the implementing team's control. A 12-month roadmap in these sectors requires governance work to begin in Month 1, not Month 5.

Model Performance Degrades in Production

A model validated at Month 5 is operating on data from a specific time window. Production data distributions shift due to seasonality, market changes, and behavioral changes, and models degrade without active monitoring and retraining. Organizations that do not build monitoring and retraining into the roadmap will see performance erosion within 6 to 12 months of go-live.

Third-Party and Cloud Vendor Dependencies Introduce Uncontrolled Risk

Enterprise AI implementations that depend on third-party foundation models, cloud ML APIs, or external data providers are exposed to vendor pricing changes, API deprecation, data access restrictions, and model updates that alter downstream behavior without notice. Vendor dependency risk must be documented and mitigated during Phase 1.

Decision Framework: When to Execute a Formal 12-Month AI Implementation Roadmap

Not every AI initiative requires a full 12-month enterprise implementation roadmap. Applying the full framework to a low-stakes internal automation tool creates overhead without proportionate value. Conversely, treating a high-stakes customer-facing AI deployment as an informal project creates unacceptable risk. Use the criteria below to determine the appropriate implementation approach.

| Decision Criterion | Full 12-Month Roadmap | Lightweight Deployment (under 6 months) | Defer or Descope |
| --- | --- | --- | --- |
| Risk Tier | High-risk (regulated sector, customer-facing, automated decisions) | Medium-risk (internal tool, human-reviewed outputs) | Undefined or no measurable business case |
| Data Readiness | Level 3 or above data governance maturity confirmed | Level 2 with targeted remediation feasible | Level 1. Data foundations not in place |
| Regulatory Exposure | EU AI Act, SR 11-7, HIPAA, GDPR Art. 22 applicable | Minimal or no regulatory obligations | Regulatory scope unknown |
| Organizational Readiness | Dedicated team, executive sponsor, change management budget | Part-time team, moderate stakeholder alignment | No executive sponsor, no adoption plan |
| Business Case Clarity | Defined ROI target, measurable KPIs, approved budget | Directional value estimate, exploratory mandate | No success metrics defined |
| Infrastructure Readiness | MLOps tooling available or planned, integration path defined | Basic integration feasible, manual steps acceptable | Core data infrastructure not operational |

When to execute the full roadmap: The organization is deploying AI into a regulated or customer-facing context, the use case has defined financial stakes, and governance obligations exist. Budget and dedicated resourcing are confirmed.

When a lighter approach is appropriate: The use case is internal, low-risk, and the team has prior AI deployment experience. The pilot can deliver value without enterprise-grade governance overhead.

When to defer: Data foundations are not in place, no executive sponsor exists, or the business case cannot be articulated with measurable outcomes. Deploying AI without these foundations produces technical artifacts, not business value.
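The criteria above can be read as a small decision procedure. The sketch below encodes one possible interpretation. The field names, the `Approach` enum, and the tie-breaking rule (a high-risk use case without Level 3 data maturity or a dedicated team is deferred until those are in place) are assumptions layered on top of the table, not rules stated in it.

```python
from dataclasses import dataclass
from enum import Enum


class Approach(Enum):
    FULL_ROADMAP = "full 12-month roadmap"
    LIGHTWEIGHT = "lightweight deployment"
    DEFER = "defer or descope"


@dataclass
class Assessment:
    high_risk: bool                 # regulated, customer-facing, or automated decisions
    data_maturity_level: int        # 1 = foundations missing, 3+ = governed
    regulatory_scope_known: bool
    executive_sponsor: bool
    success_metrics_defined: bool
    dedicated_team: bool


def recommend(a: Assessment) -> Approach:
    # Any "Defer or Descope" condition from the table blocks the initiative.
    if (
        a.data_maturity_level <= 1
        or not a.regulatory_scope_known
        or not a.executive_sponsor
        or not a.success_metrics_defined
    ):
        return Approach.DEFER

    if a.high_risk:
        # Assumed tie-break: high-risk work waits until data and resourcing are ready.
        if a.data_maturity_level >= 3 and a.dedicated_team:
            return Approach.FULL_ROADMAP
        return Approach.DEFER

    return Approach.LIGHTWEIGHT


if __name__ == "__main__":
    credit_model = Assessment(True, 3, True, True, True, True)
    internal_tool = Assessment(False, 2, True, True, True, False)
    print(recommend(credit_model).value)
    print(recommend(internal_tool).value)
```

A real assessment would weigh these criteria with more nuance. The value of writing the rules down is that the defer conditions are checked first, before any investment discussion starts.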

For a practical assessment of the regulatory obligations that determine roadmap complexity, see Regulatory Compliance for AI on the Samta.ai blog. To understand the most common decision points where enterprise AI initiatives fail, The 5 Biggest AI Mistakes provides direct, evidence-based guidance.

Conclusion: The Roadmap Is the Risk Mitigation Strategy

Enterprise AI initiatives fail in predictable ways, not because the models are wrong, but because the path from pilot to production was never built with enough structural rigor to survive contact with organizational reality. Data gaps surface mid-pilot. Governance requirements appear after the model is built. Change management is treated as a launch announcement rather than a six-month workstream. A well-constructed enterprise AI implementation roadmap makes these failure modes visible early enough to manage them, before the next investment is committed. For the most common points where enterprise AI programs break down, see The 5 Biggest AI Mistakes on the Samta.ai blog.

Samta.ai brings full-lifecycle expertise to enterprise AI programs, covering data engineering, model development, MLOps infrastructure, and production monitoring through the VEDA platform. With deep specialization in AI, machine learning, and enterprise data engineering, Samta.ai structures implementation around measurable outcomes, not just technical deliverables, helping B2B teams close the gap between pilot and production with the precision that regulated, high-stakes deployments require.

Ready to Build Your 12-Month AI Implementation Roadmap?

Samta.ai's AI and ML experts help enterprise teams move from pilot to governed production, on time, on budget, and with measurable outcomes built in from day one. Book a Demo with Samta.ai →

About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

Tatva: AI-driven data intelligence for governed analytics and insights

VEDA: Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI: Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

FAQs: AI Implementation Roadmap Enterprise

What is an AI implementation roadmap for enterprises and how long does it take?

An enterprise AI implementation roadmap is a phase-gated plan that moves an AI initiative from use case identification through pilot, validation, governance integration, and production deployment. For most mid-to-large enterprises, 10 to 14 months is a realistic timeline for a single high-risk use case. The 12-month benchmark assumes adequate resourcing, pre-existing data infrastructure at Level 3 maturity, and governance work beginning in the first phase, not after validation.

What are the most common reasons enterprise AI pilots fail to reach production?

The primary causes are data quality issues discovered after pilot start, insufficient governance preparation for regulated deployments, organizational resistance to acting on model outputs, and lack of executive sponsorship to resource change management. Technical model failure is a less frequent cause than these organizational and data-related factors. See The Intersection of AI for a detailed breakdown of how organizational and technical factors intersect in failed deployments.

When should governance integration begin in the AI implementation lifecycle?

Governance integration should begin in Phase 1 of the implementation lifecycle during discovery and use case prioritization, not after the model is built. The risk tier of the use case determines the governance requirements that constrain the subsequent phases. Discovering a regulatory obligation at Phase 4 that was not accounted for in Phase 1 is one of the most costly timeline risks in enterprise AI deployment.

What infrastructure is required before beginning a 12-month AI implementation roadmap?

Minimum infrastructure requirements include a governed data pipeline capable of delivering clean, labeled training data; a version-controlled model development environment; an MLOps tooling stack for experiment tracking and model registry; integration pathway documentation for target production systems; and a monitoring solution for post-deployment performance tracking. The VEDA analytics platform from Samta.ai addresses the monitoring and pipeline visibility requirements across the full implementation lifecycle.