
What if your AI didn't just answer questions but actually completed complex, multi-step tasks on your behalf, adapting in real time, calling the right tools, and knowing when to ask for human guidance? That's the promise of Agentic AI Engineering Architecture & Governance, and in 2026 it's no longer just a promise. According to industry research, over 67% of enterprise AI projects that failed in 2023–2024 did so not because the models were bad, but because the surrounding architecture, context management, and governance frameworks were absent. In this guide, we walk you through everything from what agentic systems actually are to how to build and govern them responsibly. Whether you're a business decision-maker, a data engineer, or someone exploring agentic AI systems engineering applications, you'll find actionable insights here.

What Is Agentic AI Engineering Architecture & Governance?

Agentic AI refers to AI systems that can plan, reason, use tools, and take multi-step actions to achieve a goal with minimal human hand-holding at each step. Unlike a chatbot that responds to a single prompt, an AI agent loops: it observes its environment, decides on an action, executes it, and then reassesses.

Architecture in this context means the structural design of how these agents are built: how they receive context, which tools they can call, how memory is managed, and how multiple agents coordinate. Governance is the set of policies, guardrails, audit mechanisms, and accountability structures that ensure these systems operate safely and reliably.

Key Distinction: Agentic AI vs Generative AI: Generative AI produces content from a single input. Agentic AI pursues a goal over many steps, using tools like web search, code execution, or database queries along the way. Think of generative AI as a brilliant writer and agentic AI as a brilliant project manager who also writes.

Governance matters enormously here. Without it, an agent operating in a production environment (say, one that can send emails, write to databases, or trigger API calls) becomes a liability. Proper agentic AI governance includes access control, rate limiting, human-in-the-loop checkpoints, and full audit trails. To understand how leading organizations are structuring this, read our global AI governance framework.

The Evolution: From Prompt Engineering to Agentic Systems

2020–2022: The Prompt Engineering Era

The conversation started with prompt engineering, the craft of writing better instructions to get better outputs from language models. It was powerful, but fundamentally reactive: one input, one output.

2023–2024: The Rise of AI Agents

With GPT-4, Claude, and open-source models reaching new capability thresholds, developers began chaining prompts into workflows. AutoGPT, LangChain, and CrewAI brought the concept of AI agents for data engineering and task automation into production environments. But hallucination, context loss, and lack of governance led to high failure rates.

2025–2026: Agentic AI Systems Engineering

Today, the discipline has matured into a proper engineering practice. Teams now design context windows deliberately, implement memory tiers, build multi-agent orchestration, and treat governance as a first-class architectural concern, not an afterthought. For a foundational understanding of what this requires at the infrastructure level, see our guide on building an AI-ready data stack.

Industry Stat: The global AI agents market is projected to reach $47 billion by 2030, growing at 44% CAGR. Full report

Agentic AI vs Prompt Engineering vs Generative AI

| Dimension | Prompt Engineering | Generative AI | Agentic AI | Notes / Explanation | Impact Level |
|---|---|---|---|---|---|
| Task scope | Single-turn | Single/multi-turn | Multi-step, goal-driven | Defines how complex tasks each approach can handle | High |
| Tool use | ✗ | ✗ (mostly) | ✓ Native | Ability to interact with external systems (APIs, DBs, tools) | High |
| Memory | ✗ | Short-term only | ✓ Short + long-term | Determines continuity and learning across interactions | High |
| Planning | ✗ | ✗ | ✓ Explicit reasoning | Ability to break down tasks into steps and execute logically | Very High |
| Governance needs | Low | Medium | High (critical) | Required level of control, audit, and compliance | Critical |
| Engineering complexity | Low | Medium | High | Effort required to design and deploy systems | High |
| ROI potential | Moderate | High | Very high | Business value and automation potential | Very High |


Core Components of an Agentic AI System

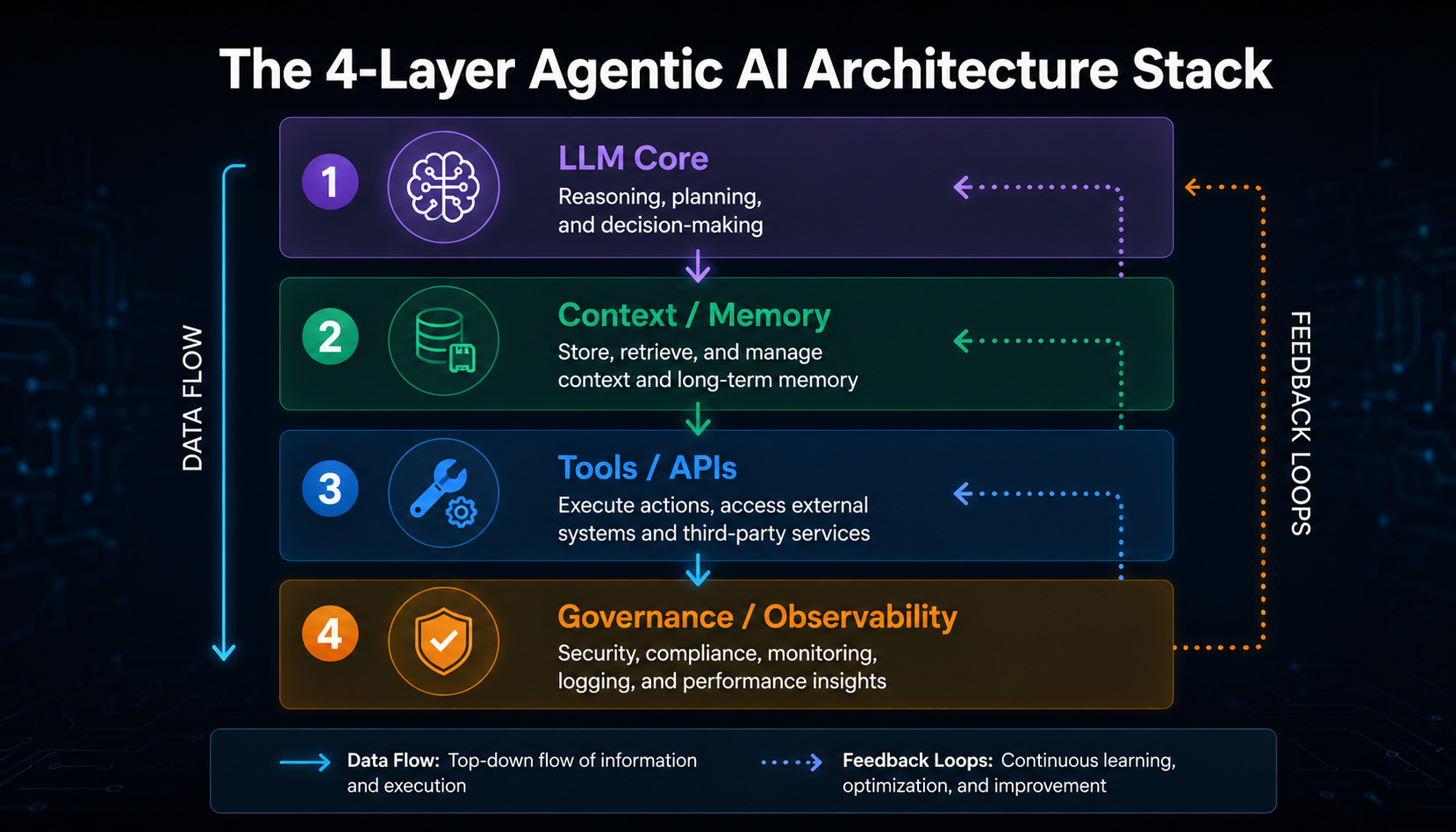

Building a reliable agentic system isn't about plugging in an LLM and hoping for the best. It requires four tightly integrated layers:

1. The Agent Brain (LLM Core)

The large language model at the center (GPT-4o, Claude 3.5, Gemini 1.5) acts as the reasoning engine. It interprets goals, decides which tool to call next, and synthesizes outputs. Choosing the right model significantly impacts both performance and cost. See how our Veda AI analytics platform handles model selection and orchestration at enterprise scale.

2. Context & Memory Management

This is where most agentic projects succeed or fail. Agents need working memory (what's happening right now), episodic memory (what happened in past sessions), and semantic memory (domain knowledge). Managing what goes into the context window, and what doesn't, is the art of context engineering for AI agents. Getting this right requires a properly structured AI-ready data engineering foundation underneath.

3. Tool & API Orchestration

Agents derive their power from the tools they can use: web search, SQL queries, Python execution, REST API calls, file manipulation. Each tool integration requires careful permission scoping: an agent shouldn't have more access than the specific task requires. Our data integration consulting services help teams design these integrations correctly from the start.

4. Governance & Observability Layer

Every agent action should be logged. Human-in-the-loop checkpoints should be defined for high-stakes decisions. Rate limits, budget caps, and rollback mechanisms prevent runaway agents. This is precisely what our AI security and compliance services are designed to support.
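As a rough illustration of the logging and budget-cap ideas above, here is a minimal sketch. The `GovernedSession` and `BudgetExceeded` names, and the choice to cap both tokens and calls, are assumptions made for this example rather than part of any particular framework.

```python
import time


class BudgetExceeded(RuntimeError):
    """Raised when an action would push the session past a cap."""


class GovernedSession:
    """Tracks spend for one agent task and keeps an audit trail (illustrative)."""

    def __init__(self, max_tokens: int, max_calls: int):
        self.max_tokens = max_tokens
        self.max_calls = max_calls
        self.tokens_used = 0
        self.calls_made = 0
        self.audit_log: list[dict] = []

    def record(self, action: str, tokens: int) -> None:
        """Log the action, then raise if it would exceed either cap."""
        allowed = (
            self.calls_made + 1 <= self.max_calls
            and self.tokens_used + tokens <= self.max_tokens
        )
        # Blocked attempts are logged too, so the audit trail stays complete.
        self.audit_log.append(
            {"ts": time.time(), "action": action, "tokens": tokens, "allowed": allowed}
        )
        if not allowed:
            raise BudgetExceeded(f"{action} blocked: cap reached")
        self.calls_made += 1
        self.tokens_used += tokens
```

Calling `record` before each LLM or tool call means a runaway agent stops at the cap instead of after the bill arrives. In production the log would go to durable storage rather than an in-memory list.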

Context Engineering for AI Agents: Lessons from the Field

Context engineering is fast becoming one of the most critical skills in agentic AI development. The term, popularized through work on building systems like Manus (a fully autonomous AI agent), describes the deliberate design of what information an agent sees and, crucially, what it doesn't.

Why Context Engineering Matters More Than Prompting

In our work with 50+ enterprise clients, we've found that agents fail not because they lack intelligence, but because they're overloaded with irrelevant information or starved of critical context at the wrong moment. A well-engineered context window is like a well-briefed employee: they have what they need, when they need it, nothing more, nothing less.

Pro Tip: The 3-Layer Context Principle: Structure your agent's context into (1) a stable system prompt with role + rules, (2) a dynamic scratchpad for the current task, and (3) retrieved memory snippets from past sessions. Keeping these layers separate prevents context pollution and dramatically improves task performance.
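One way to keep the three layers separate is to store them in distinct fields and only join them at render time. The sketch below assumes a hypothetical `AgentContext` class and plain-text section headers; neither comes from a specific library.

```python
from dataclasses import dataclass, field


@dataclass
class AgentContext:
    """Three separate context layers (all names here are illustrative)."""

    system_prompt: str                                   # layer 1: stable role + rules
    scratchpad: list[str] = field(default_factory=list)  # layer 2: current-task notes
    memories: list[str] = field(default_factory=list)    # layer 3: retrieved snippets

    def render(self) -> str:
        """Join the layers under labelled headers; empty layers are skipped."""
        sections = [
            ("SYSTEM", self.system_prompt),
            ("RETRIEVED MEMORY", "\n".join(self.memories)),
            ("SCRATCHPAD", "\n".join(self.scratchpad)),
        ]
        return "\n\n".join(f"## {name}\n{body}" for name, body in sections if body)
```

Because each layer lives in its own field, the scratchpad can be cleared between tasks and the memory snippets swapped per step without touching the system prompt.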

Key Lessons from Building Production Agentic Systems

Compression beats completeness. Summarize past steps rather than re-including raw transcripts. A 200-token summary of the last 5 steps outperforms a 2,000-token verbatim log.

Tool outputs need filtering. When an agent calls a database and gets 500 rows, only the relevant 10 rows should enter the context. Pre-filter before injection.

Failure modes are context failures. Most agent loops that "go in circles" do so because the context doesn't clearly communicate what has already been attempted.

System prompts are contracts. Treat your system prompt as a legal document — precise, unambiguous, and version-controlled.
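The "tool outputs need filtering" lesson can be sketched as a small pre-filter that runs before anything is injected into the context. The `filter_rows` helper and its `is_relevant` predicate are hypothetical names for this example; how relevance is decided depends on the task.

```python
def filter_rows(rows, is_relevant, limit=10):
    """Keep at most `limit` relevant rows and describe what was left out.

    rows: list of dicts returned by a tool call.
    is_relevant: caller-supplied predicate (an assumption of this sketch).
    """
    kept = [row for row in rows if is_relevant(row)][:limit]
    omitted = len(rows) - len(kept)
    note = f"{len(kept)} of {len(rows)} rows shown; {omitted} omitted"
    return kept, note
```

Passing the short note along with the kept rows tells the agent that more data exists, so it can ask for it explicitly instead of assuming the result set is complete.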

The quality of your context engineering is directly tied to the quality of your underlying data pipelines. Read our detailed guide on AI-ready data engineering infrastructure to understand what needs to be in place before you build.


The 7-Step Framework to Build & Govern AI Agents

Based on our experience deploying agentic AI systems engineering applications across industries, we've developed a framework that balances speed with safety. Here's the full playbook:

Step 1: Define the Goal & Scope Boundary

Before touching any code, write a one-page "Agent Charter" — exactly what this agent is allowed to do, what it must escalate, and what data it can access.

Tools used: Notion, Confluence, internal wikis.

Pro Tip: Start with a single, well-bounded task. A "research assistant" agent that searches the web and summarizes findings is far easier to govern than a "general assistant" with open-ended permissions.

Step 2: Design the Context Architecture

Map out your memory tiers: what goes in the system prompt, what is retrieved from a vector database, and what is generated dynamically.

Tools used: Pinecone, Weaviate, pgvector, Redis.

Challenge: Balancing context richness with token cost.

Best practice: Use semantic similarity to retrieve only the 3–5 most relevant memory chunks per agent step. This is much easier when your data infrastructure is already structured for AI readiness.
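A minimal sketch of that retrieval step, using cosine similarity in plain Python. It assumes embeddings have already been computed elsewhere; in practice a vector database such as the ones listed above would do the ranking, and the `top_k` helper here is only illustrative.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, chunks, k=3):
    """Return the text of the k chunks most similar to the query.

    chunks: list of (text, embedding) pairs; embeddings are assumed
    to come from whatever embedding model the team already uses.
    """
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, c[1]), reverse=True)
    return [text for text, _ in ranked[:k]]
```

Keeping `k` small (3 to 5, as suggested above) bounds the token cost of each agent step regardless of how large the memory store grows.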

Step 3: Build & Scope Tool Integrations

Each tool the agent can use should be wrapped in a typed interface with clear documentation, input validation, and error handling.

Tools used: LangChain, LlamaIndex, OpenAI Function Calling, Anthropic Tool Use.

Real-world example: A data engineering agent that can query a warehouse should receive read-only credentials scoped to specific schemas, never admin access. Our data integration consulting team helps enterprises set these permission boundaries correctly across complex data environments.
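A typed wrapper for such a warehouse tool might look like the sketch below. The `WarehouseQuery` type, the `ALLOWED_SCHEMAS` set, and the injected `execute` callable are all hypothetical. The string checks are only a first line of defense and do not replace read-only database credentials.

```python
from dataclasses import dataclass

ALLOWED_SCHEMAS = {"analytics", "reporting"}  # hypothetical schema allow-list


@dataclass(frozen=True)
class WarehouseQuery:
    """Validated input for a read-only warehouse tool (illustrative)."""

    schema: str
    sql: str

    def __post_init__(self):
        if self.schema not in ALLOWED_SCHEMAS:
            raise PermissionError(f"schema '{self.schema}' is not allowed")
        if not self.sql.lstrip().lower().startswith("select"):
            raise ValueError("only SELECT statements are allowed")
        # Reject stacked statements; one trailing semicolon is tolerated.
        if ";" in self.sql.rstrip().rstrip(";"):
            raise ValueError("multiple statements are not allowed")


def run_query(query: WarehouseQuery, execute):
    """Run a validated query and return a structured result.

    execute: callable that takes SQL and returns rows; assumed to be
    bound to read-only credentials by the caller.
    """
    try:
        return {"ok": True, "rows": execute(query.sql)}
    except Exception as exc:  # surface errors to the agent instead of crashing
        return {"ok": False, "error": str(exc)}
```

Returning a structured result in both the success and failure case gives the agent something it can reason about on the next step.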

Step 4: Implement the Reasoning Loop

Choose your agent pattern: ReAct (Reason + Act), Plan-and-Execute, or multi-agent collaboration. Each pattern has different strengths.

Tools used: LangGraph, AutoGen, CrewAI, custom loop implementations.

Best practice: Add a "max steps" hard limit to every agent loop. Without it, runaway loops can burn thousands of tokens and dollars before anyone notices.
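To make the "max steps" limit concrete, here is a minimal ReAct-style loop. The `decide` callable stands in for an LLM call and the `tools` dictionary for real integrations; both are assumptions of this sketch, not the API of any framework named above.

```python
from typing import Callable


def run_agent(
    decide: Callable[[list[str]], tuple[str, str]],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 10,
) -> dict:
    """Reason/act loop that always stops after max_steps iterations."""
    history: list[str] = []
    for step in range(1, max_steps + 1):
        action, arg = decide(history)       # "reason": stand-in for an LLM call
        if action == "finish":
            return {"status": "done", "answer": arg, "steps": step}
        observation = tools[action](arg)    # "act": run the chosen tool
        history.append(f"{action}({arg}) -> {observation}")
    # Hard stop: the loop never runs past the configured limit.
    return {"status": "max_steps_reached", "answer": None, "steps": max_steps}
```

Returning a distinct `max_steps_reached` status, rather than raising, lets the caller route the task to a human or a retry policy.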

Step 5: Integrate Human-in-the-Loop Checkpoints

Identify the decision points where the agent must pause and request human approval, typically before any irreversible action (sending emails, deleting data, making API calls to external systems).

Tools used: Slack webhooks, approval workflow tools, custom UI dashboards.

This is a core pillar of responsible AI deployment. Our global AI governance framework outlines exactly how to design these checkpoints across different risk tiers.
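A checkpoint of this kind can be sketched as a gate in front of the action executor. The action names in `IRREVERSIBLE` and the `ask_human` callback are placeholders; in a real deployment the callback might post to a Slack webhook or an approval dashboard and wait for a response.

```python
IRREVERSIBLE = {"send_email", "delete_data", "call_external_api"}  # illustrative


def execute_with_approval(action, payload, run, ask_human):
    """Run an action, pausing for human approval if it is irreversible.

    run: callable(action, payload) that performs the action.
    ask_human: callable(action, payload) returning True to approve.
    Both callables are assumptions of this sketch.
    """
    if action in IRREVERSIBLE and not ask_human(action, payload):
        return {"status": "rejected", "action": action}
    return {"status": "executed", "action": action, "result": run(action, payload)}
```

Reversible actions pass straight through, so the human is only interrupted for the decisions that actually carry risk.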

Step 6: Deploy Observability & Monitoring

Every agent action (every LLM call, every tool invocation, every output) should be logged with full traceability. This is essential for debugging, auditing, and catching AI model drift before it compounds into larger failures.

Tools used: LangSmith, Arize AI, Weights & Biases, custom logging pipelines.

KPIs to track: Task success rate, average steps per completion, cost per task, human escalation rate.
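The four KPIs can be computed directly from per-task log records. The record fields used below (`success`, `steps`, `cost_usd`, `escalated`) are an assumed schema for illustration; adapt them to whatever your logging pipeline emits.

```python
def compute_kpis(task_logs):
    """Aggregate per-task log records into the four KPIs.

    Each record is a dict with keys: success (bool), steps (int),
    cost_usd (float), escalated (bool). This schema is an assumption.
    """
    total = len(task_logs)
    if total == 0:
        raise ValueError("no task logs to aggregate")
    completed = [t for t in task_logs if t["success"]]
    avg_steps = (
        sum(t["steps"] for t in completed) / len(completed) if completed else 0.0
    )
    return {
        "task_success_rate": len(completed) / total,
        "avg_steps_per_completion": avg_steps,
        "cost_per_task": sum(t["cost_usd"] for t in task_logs) / total,
        "human_escalation_rate": sum(1 for t in task_logs if t["escalated"]) / total,
    }
```

Average steps is computed over successful tasks only, so a spike in failed runs that hit the step limit does not distort it.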

Step 7: Establish the Governance Lifecycle

An agent deployed is an agent that needs ongoing care. Define your AI model lifecycle management process: regular performance reviews, policy updates as regulations change, model version control, and deprecation planning.

Governance checklist includes: Access review cadence, bias audits, compliance

Top AI Engines & Tools for Agentic Functions

A common question we get: "What are the top AI engines for agentic functions?" Here's our honest breakdown based on production deployments:

| Tool / Framework | Best For | Maturity | Open Source | Governance Features | Typical Use Case |
|---|---|---|---|---|---|
| | Enterprise data + agentic analytics | High | ✗ | Enterprise-grade | Large-scale governed AI analytics in enterprises |
| LangGraph | Complex multi-agent workflows | High | ✓ | Good | Building structured multi-agent systems with control flows |
| AutoGen (Microsoft) | Multi-agent conversations | High | ✓ | Moderate | Collaborative AI agents communicating with each other |
| LlamaIndex | RAG + agentic data retrieval | High | ✓ | Good | Data-driven agents with retrieval from databases |
| CrewAI | Role-based agent teams | Medium | ✓ | Basic | Simulating teams of AI agents with defined roles |
| OpenAI Assistants API | Quick agent prototyping | High | ✗ | Limited | Fast development of simple AI agents |
| Anthropic Tool Use | Safe, reliable tool calling | High | ✗ | Strong | Secure tool execution with controlled outputs |

For teams choosing an AI agent for data analysis, the right choice depends on your data infrastructure. If you're heavily embedded in cloud data warehouses (Snowflake, BigQuery, Redshift), LlamaIndex with a SQL agent pattern delivers excellent results. Not sure which fits your stack? Our data integration consulting specialists can map the right tools to your architecture.

Real-World Agentic AI Applications by Industry

Wondering how to implement agentic AI in your specific domain? Here are four detailed examples from deployments we've studied closely:

Healthcare: Clinical Documentation Agent

Problem: Physicians spend 3+ hours/day on EHR documentation.

Solution: An agentic system that listens to patient consultations, retrieves relevant patient history from the EHR, drafts clinical notes, and flags abnormal values for physician review.

Implementation: Whisper API for transcription → agent with EHR tool integration → human approval before record commit.

Result: ↓ 68% documentation time

Finance: Fraud Detection Agent

Problem: Rule-based fraud systems miss sophisticated, novel fraud patterns.

Solution: A multi-step agent that, upon flagging a transaction, autonomously queries transaction history, checks against known fraud patterns, cross-references external threat intelligence, and produces a risk report with a recommendation. The hidden risks of letting such systems run without guardrails are explored in depth in our article on the hidden risks of ungoverned AI.

Human checkpoints: Final approve/block decision stays with humans.

Result: ↑ 41% fraud catch rate

Manufacturing: Predictive Maintenance Agent

Problem: Equipment failures cause unplanned downtime costing $260K/hour.

Solution: An AI agent continuously monitors IoT sensor streams, queries maintenance logs when anomalies are detected, runs failure probability models, and automatically schedules maintenance windows, notifying engineers with a full diagnostic report. This kind of system depends on robust AI model lifecycle management to stay accurate as equipment conditions evolve.

Result: ↓ 35% unplanned downtime

E-commerce: Cart Recovery Agent

Problem: 70% of carts are abandoned. Generic email blasts recover under 5%.

Solution: An agent that analyzes each abandonment event (checking browsing history, past purchases, and discount eligibility), then crafts a personalized recovery message, selects the optimal send time, and A/B tests subject lines, all within a governance framework that caps discount depth to protect margins.

Result: ↑ 23% recovery rate

Challenges & How to Overcome Them

Implementing agentic AI is not without its obstacles. Here's what to anticipate and how to address each challenge head-on:

Context drift and hallucination: As agent conversations lengthen, models can "forget" earlier constraints. Mitigation: Implement periodic context compression and re-injection of core system rules every N steps.

Tool reliability and error cascades: One failed API call can derail an entire agent workflow. Mitigation: Build explicit error-handling branches and fallback behaviors into every tool wrapper.

Security and prompt injection: Malicious content in tool outputs can hijack agent behavior. Mitigation: Sanitize all tool outputs before injecting into the context; implement content classifiers as a governance layer. See the OWASP LLM Top 10 for a comprehensive checklist of LLM-specific security risks.

Cost management: Agents can rack up substantial LLM API costs. Mitigation: Set per-task token budgets, use smaller models for sub-tasks, and cache frequent tool outputs.

Regulatory compliance: GDPR, HIPAA, and the EU AI Act impose strict requirements on automated decision-making. Mitigation: Design your AI security and compliance architecture to meet regulatory requirements from day one; retrofitting is far more expensive and risky.

Talent gap: The skills required (LLM engineering, systems design, data engineering, and governance) rarely coexist in one person. Mitigation: Build cross-functional teams and invest in structured upskilling programs.
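The tool-reliability mitigation in the list above (explicit error handling and fallback behavior in every tool wrapper) could be sketched as follows. The `call_with_fallback` helper, the retry count, and the shape of the returned dict are illustrative choices, not a prescribed interface.

```python
def call_with_fallback(tool, arg, fallback, retries=2):
    """Call a tool, retrying on failure, then return a fallback value.

    tool: callable(arg) that may raise.
    fallback: value handed back if every attempt fails.
    The result dict always has the same keys, so the agent can branch on "ok".
    """
    last_error = None
    for attempt in range(1, retries + 2):  # one initial try plus `retries` retries
        try:
            return {"ok": True, "value": tool(arg), "attempts": attempt, "error": None}
        except Exception as exc:
            last_error = exc
    return {
        "ok": False,
        "value": fallback,
        "attempts": retries + 1,
        "error": str(last_error),
    }
```

A real wrapper would usually add a delay between attempts and retry only on errors known to be transient. Both are left out here to keep the sketch short.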

Pro Tip: Shadow Mode Deployment: Run your agent in parallel with existing human workflows for 2–4 weeks before giving it any live authority. Compare its decisions to human decisions to calibrate trust before granting real agency.
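During a shadow-mode run, the comparison between agent and human decisions can be as simple as an agreement rate plus a list of the cases that differ. The `shadow_agreement` helper below is a hypothetical sketch; what counts as "the same decision" will depend on your workflow.

```python
def shadow_agreement(pairs):
    """Compare agent decisions with human decisions made on the same cases.

    pairs: list of (agent_decision, human_decision) tuples.
    Returns the agreement rate and the indexed disagreements for review.
    """
    if not pairs:
        raise ValueError("no decisions to compare")
    disagreements = [
        (index, agent, human)
        for index, (agent, human) in enumerate(pairs)
        if agent != human
    ]
    return {
        "agreement_rate": 1 - len(disagreements) / len(pairs),
        "disagreements": disagreements,
    }
```

Reviewing the disagreements case by case is usually more informative than the headline rate, since it shows which kinds of decisions the agent is not yet ready to own.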

The Future of Agentic AI Engineering (2026–2030)

The field is moving fast. Here's what the next four years look like, based on current research trajectories and early enterprise signals:

Multi-agent societies (2026–2027): Instead of one agent doing everything, organizations will deploy specialized agent teams (a researcher, a writer, a validator, a deployer) coordinated by an orchestrator agent. This mirrors how effective human teams work.

Standardized governance protocols (2027): The industry desperately needs common standards for agent identity, capability declarations, and audit logging. Expect W3C-style standards bodies to emerge around agent governance in the next 18 months. Businesses that have already implemented a solid global AI governance framework will be best positioned to adapt quickly.

Agentic AI and edge computing (2028): Lighter agentic models running on-device will enable real-time, privacy-preserving agents in healthcare devices, manufacturing equipment, and consumer electronics.

Will agentic AI replace software engineers? The honest answer: it will replace certain categories of repetitive coding tasks, while dramatically amplifying the productivity of engineers who understand how to architect and govern agentic systems. The most valuable engineers in 2028 will be those who can design, oversee, and debug AI agent networks, not those who fear them.

Conclusion

Agentic AI is not a future trend; it's a present-tense engineering discipline. The organizations that invest now in understanding the architecture, mastering context engineering, and building governance frameworks will have a decisive competitive advantage through the rest of this decade. The patterns are clear: start small, govern from day one, measure rigorously, and scale what works. The biggest mistake we see is treating governance as a compliance checkbox rather than an architectural foundation. Build it in from the start, and your agents will earn and keep the trust of both your users and your regulators. Whether you're exploring your first AI agent for data analysis or designing a multi-agent system for enterprise operations, the framework, tools, and governance principles in this guide give you a solid foundation to build responsibly and effectively.

Ready to Build Your First AI Agent?

Our team has helped 50+ companies design, deploy, and govern agentic AI systems that actually work in production. Let's start with your use case. Schedule a Free Consultation →

About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

TATVA : AI-driven data intelligence for governed analytics and insights

VEDA : Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI : Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

Samta.ai provides the strategic consulting and technical engineering needed to align your human capital with your AI goals, ensuring a frictionless.

Frequently Asked Questions

What is agentic AI, and how does it differ from standard AI chatbots?

Standard AI chatbots respond to a single message with a single response. Agentic AI systems, by contrast, pursue multi-step goals autonomously: they can plan sequences of actions, call external tools (like databases or APIs), store and retrieve memory, and adapt their approach based on intermediate results. Think of a chatbot as a one-question consultant and an AI agent as an autonomous analyst who can execute a full research project from start to finish. For a deeper look at the infrastructure that powers this, see our Veda AI platform overview.

Will AI agents replace software engineers?

Not in the near term, but the role will evolve significantly. AI agents will automate repetitive coding tasks (writing boilerplate, generating tests, debugging known error patterns), freeing engineers to focus on system architecture, product thinking, and, increasingly, designing and governing the AI agent systems themselves. Engineers who understand how to build and oversee agentic systems will be among the most sought-after professionals in the 2026–2030 job market.

How do I choose the right AI agent for data engineering workflows?

Start by mapping the workflow you want to automate: what tools does it require (SQL, Python, dbt, Airflow)? What data does it access, and how sensitive is it? What human approval points do you need? Once you have that map, evaluate agent frameworks by their tool integration ecosystem, memory management, and governance controls. For most enterprise data engineering use cases, LangGraph or LlamaIndex with a SQL agent pattern is a solid starting point. Our data integration consulting team can help you map the right tools to your specific stack.

How long does it take to implement a production-ready AI agent?

For a well-scoped, single-purpose agent (e.g., a data summarization agent or a customer query router), expect 4–8 weeks from design to production-ready deployment. This includes 1–2 weeks for architecture design, 2–3 weeks for development and testing, and 1–2 weeks for governance setup and shadow-mode validation. Multi-agent systems with complex orchestration typically require 3–6 months. The governance layer accounts for about 30% of implementation time when done properly.

What governance frameworks apply to agentic AI in regulated industries?

In regulated industries (healthcare, finance, legal), agentic AI deployments must address several compliance layers: GDPR or CCPA for data privacy, HIPAA for healthcare data, SOC 2 for security controls, and the EU AI Act for high-risk AI applications. The architecture-level requirement is full audit logging of every agent decision, human-in-the-loop for consequential actions, and explainability documentation. Our global AI governance guide for regulated industries walks through each requirement in detail.