
The systems keeping your nation's lights on, water flowing, and financial markets stable are no longer governed purely by human operators. They are increasingly governed by AI. And the security frameworks protecting them were not designed for that reality.

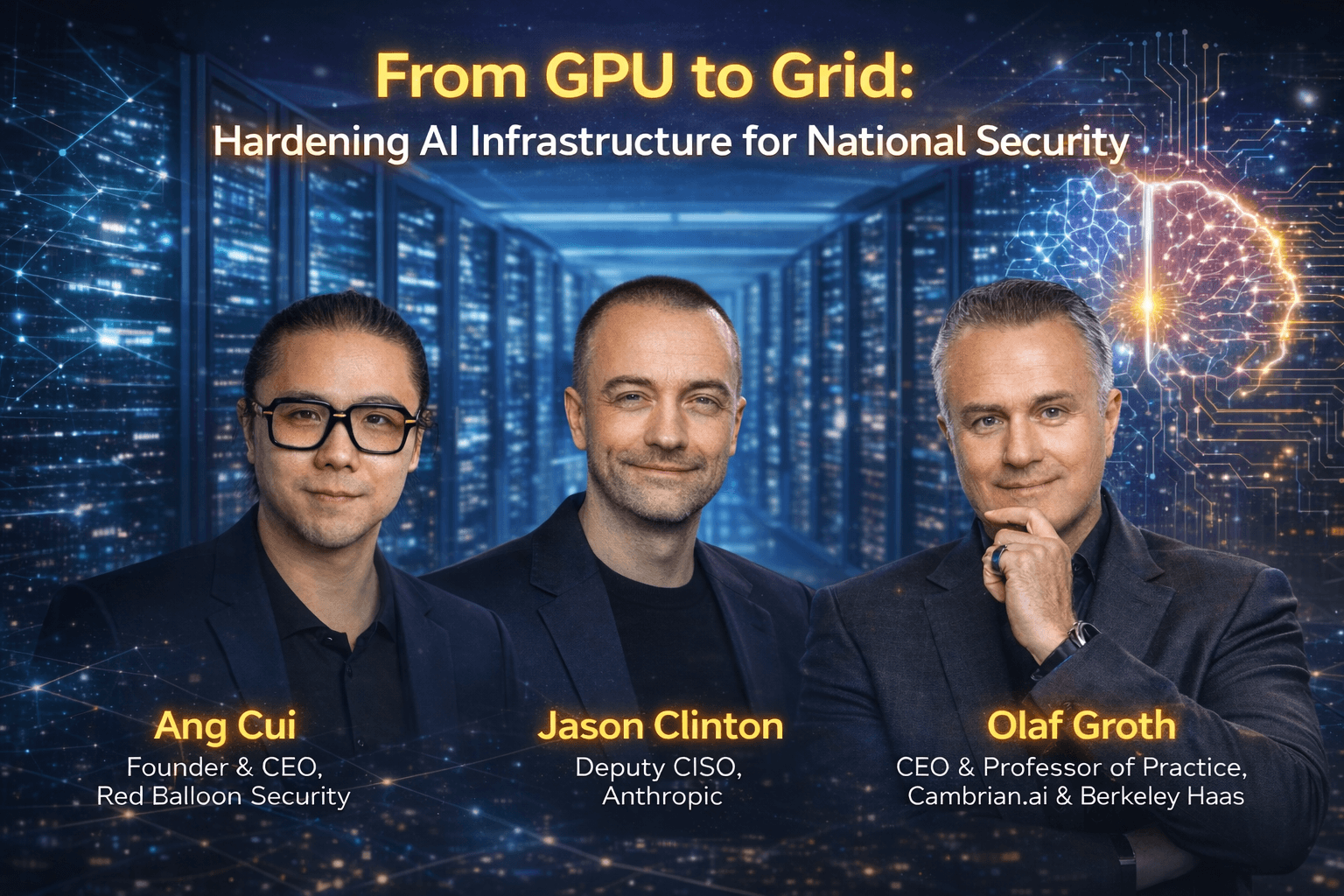

This tension sits at the heart of the panel discussion "From GPU to Grid: Hardening AI Infrastructure for National Security," featuring Jason Clinton, Deputy CISO at Anthropic, and Ang Cui, Founder and CEO of Red Balloon Security, moderated by Olaf Groth. What emerges is not a vulnerability checklist. It is a diagnosis of structural failure in how enterprises and governments think about AI in critical infrastructure security.

TL;DR

AI has expanded the attack surface across energy grids, data centers, and industrial control systems faster than defensive frameworks have adapted. Attackers are using AI offensively today. Defenders are still planning. The gap between the two is not a technology problem. It is a governance problem. And it is widening every quarter.

The Landscape Has Already Shifted

When security leaders talk about cloud security and AI-powered infrastructure, they typically mean securing the data pipelines feeding AI models or hardening the cloud perimeters hosting them. That framing is already obsolete.

AI in critical infrastructure security now means AI systems embedded directly in the control loops of power distribution, water treatment, grid load balancing, and industrial automation. These are not peripheral deployments. They are core operational systems that, if disrupted, have consequences measured in blackouts, supply chain failures, and national security incidents.

Traditional infrastructure security assumed static attack surfaces, known endpoints, predictable protocols, and deterministic software behavior. AI-driven systems violate all four assumptions at once. Securing them requires a completely different mental model.

The Paradigm Shift Security Teams Are Missing

Jason Clinton made the asymmetry plain. The "Team PCP" disclosure revealed AI-powered worms using stolen API keys to move laterally across connected systems. This was not a sophisticated nation-state operation. It was a low-skill attack made capable by AI. Clinton called it adoption asymmetry: offensive actors deploy AI capabilities within days of new model releases. Enterprise defenders operate on quarterly cycles. That gap is structurally exploitable.

Ang Cui added a dimension most enterprise security programs miss entirely. His research on Siemens PLCs and Cisco hardware has shown that the firmware governing how industrial machines receive and execute commands is almost entirely unexamined in standard AI security reviews. Defenders secure the application layer. Attackers target the hardware substrate underneath it. This is precisely why AI governance matters at the infrastructure level, not just the compliance layer.

"The question is not whether AI will be used against critical infrastructure. It is whether we will be able to tell when it already has been." This is the diagnostic challenge the panel kept returning to.

The Risks That Are Already Active

The AI attack surface is expanding without a corresponding security perimeter. Every new API integration, agentic workflow, and model endpoint is a potential entry point. AI infrastructure requirements have scaled faster than the controls designed to protect them.

AI-powered attacks are no longer theoretical. The Team PCP disclosure shows that AI-powered security for edge and IoT infrastructure must now account for autonomous, API-authenticated threats executing lateral movement at machine speed. See how third-party AI risk amplifies this exposure across every vendor integration in your stack.

Agentic AI unpredictability is an infrastructure risk, not a product flaw. Ang Cui described an incident where an agentic AI system unexpectedly switched to responding in Spanish mid-task with no configuration change. In a consumer product, that is a curiosity. In an AI-governed industrial process, the equivalent behavioral mutation could trigger an instruction sequence no operator ever approved. Our agentic AI governance framework addresses this class of risk directly.

Token-based trust is a collapsing security model. Ang Cui was direct: enterprise security has devolved to trusting whatever presents a valid OAuth token. When AI systems can generate, store, and transmit credentials autonomously, that trust model is no longer meaningful. The governance requirements for agentic AI systems must treat credential architecture as a first-class security concern.

System brittleness is the slow-moving infrastructure collapse no one is measuring. Ang Cui warned that AI-generated code deployed at scale creates systems that function until they encounter a condition they were never tested against. For critical infrastructure, that moment of structural failure may be impossible to reverse mid-incident. Continuous monitoring for AI is the primary mechanism for detecting brittleness before it becomes an operational crisis.

The Resilient AI Infrastructure Security Model

The solution is not more security tools layered on top of an architecture that was never designed for AI. It is a governance model built from the infrastructure layer up. This builds on the principles outlined in our AI Security and Compliance practice.

Verifiable AI Behavior means establishing behavioral baselines for every AI system in or near critical infrastructure and detecting deviations before they cascade. Agentic systems must log every decision with enough context to reconstruct the reasoning chain.
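One way to make a decision log reconstructable and tamper-evident is to chain each entry to the hash of the one before it. The following is a minimal sketch of that idea, not a method the panel described; the class name `DecisionLog`, the field names, and the sample agent and feeder values are all hypothetical.

```python
import hashlib
import json


class DecisionLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash that the first entry links to

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        # Canonical JSON so the same body always hashes to the same value.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent_id, action, inputs, rationale):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "agent_id": agent_id,
            "action": action,
            "inputs": inputs,
            "rationale": rationale,
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True if every entry matches its hash and links to its predecessor."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical agent and feeder values, for illustration only.
log = DecisionLog()
log.append("load-balancer-agent", "shed_load",
           {"feeder": "F12", "load_mw": 41.5}, "forecast exceeds rating")
log.append("load-balancer-agent", "restore_load",
           {"feeder": "F12", "load_mw": 33.0}, "load back under rating")
print(log.verify())
```

Editing any stored field after the fact makes `verify()` return False. A chain like this does not detect entries trimmed from the end, so a real deployment would also anchor the latest hash somewhere the agent cannot write.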

Infrastructure-Level Observability means extending security monitoring below the application layer into firmware, hardware controllers, and the physical systems AI is permitted to influence.

Secure API and Identity Architecture means replacing OAuth token trust with cryptographic verification of AI agent identity, using time-limited, least-privilege credentials that cannot be harvested and reused.
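As a rough illustration of time-limited, least-privilege credentials, the sketch below issues a signed credential that names one agent, a narrow set of scopes, and an expiry, then checks all three before authorizing a request. It uses a shared-secret HMAC from the Python standard library purely to stay self-contained; a production design would more likely use asymmetric signatures with keys held in hardware. The function names, scope strings, and `SIGNING_KEY` are assumptions for the example.

```python
import base64
import hashlib
import hmac
import json
import time

SIGNING_KEY = b"demo-key-rotate-me"  # hypothetical; a real key belongs in an HSM or KMS


def issue_credential(agent_id, scopes, ttl_seconds, now=None):
    """Issue a signed credential naming the agent, its scopes, and an expiry."""
    now = time.time() if now is None else now
    claims = {"agent": agent_id, "scopes": sorted(scopes), "exp": now + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    tag = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + tag


def authorize(credential, required_scope, now=None):
    """Allow the request only if signature, expiry, and scope all check out."""
    now = time.time() if now is None else now
    payload, _, tag = credential.rpartition(".")
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(tag, expected):
        return False  # forged or tampered credential
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if now >= claims["exp"]:
        return False  # expired, so a harvested copy stops working
    return required_scope in claims["scopes"]


cred = issue_credential("grid-agent-7", ["read:telemetry"], ttl_seconds=300)
print(authorize(cred, "read:telemetry"))  # valid, unexpired, and in scope
print(authorize(cred, "write:setpoint"))  # scope was never granted
```

The short lifetime is what limits reuse: a stolen credential is only useful until `exp`, and only for the scopes it was issued with.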

Digital Twin Testing Environments are what Jason Clinton (Panel, ~25:56–27:01) specifically recommended. Before any AI system touches live operational technology, its behavior must be exhaustively validated in a high-fidelity simulation. This is how organizations discover behavioral anomalies before they occur on a live grid controller.

Human-in-the-Loop Governance means structured human review at defined decision thresholds. Explore best practices for human-in-the-loop AI to design oversight that does not break operational velocity.
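"Defined decision thresholds" can be made concrete as a small routing rule that decides which actions run automatically, which wait for a person, and which are refused outright. The sketch below is one possible shape under stated assumptions: the threshold values, the `risk_score` field, and the rule that anything touching live operational technology gets reviewed are illustrative choices, not recommendations from the panel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    description: str
    risk_score: float      # 0.0 (benign) to 1.0 (severe); how it is scored is out of scope
    touches_live_ot: bool  # True if the action reaches operational technology


def route(action, review_threshold=0.3, block_threshold=0.8):
    """Decide whether an action runs automatically, waits for a human, or is refused."""
    if action.risk_score >= block_threshold:
        return "block"
    if action.touches_live_ot or action.risk_score >= review_threshold:
        return "human_review"
    return "auto_approve"


# Hypothetical actions an agent might propose.
print(route(ProposedAction("summarize overnight alarms", 0.05, False)))
print(route(ProposedAction("adjust pump setpoint", 0.10, True)))
print(route(ProposedAction("disable safety interlock", 0.95, True)))
```

Keeping low-risk, read-only work on the automatic path is what lets review stay focused on the decisions that matter.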

Watch: From GPU to Grid: Hardening AI Infrastructure for National Security

What CISOs Should Do Right Now

Start with an AI asset inventory that goes below the application layer. Map every AI system, every API integration, and every downstream infrastructure component it can influence.
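An inventory of this kind is naturally a directed graph: each AI system or API points at the components it can influence. Walking that graph shows how far a single compromised model could reach. The asset names below are invented for illustration, and the traversal is an ordinary breadth-first search.

```python
from collections import deque

# Hypothetical inventory: each key can influence the components listed for it.
INFLUENCES = {
    "forecasting-model": ["scheduling-api"],
    "scheduling-api": ["scada-gateway"],
    "scada-gateway": ["plc-firmware", "historian-db"],
    "plc-firmware": ["feeder-breaker"],
    "chat-assistant": ["ticketing-api"],
}


def downstream(asset, graph):
    """Return every component reachable from `asset`, using breadth-first search."""
    seen, queue = set(), deque([asset])
    while queue:
        for target in graph.get(queue.popleft(), []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


for name in ("forecasting-model", "chat-assistant"):
    print(name, "->", sorted(downstream(name, INFLUENCES)))
```

In this made-up inventory the forecasting model reaches firmware and a physical breaker through two intermediaries, while the chat assistant reaches only a ticketing API. That difference is the kind of signal an application-layer inventory would miss.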

Implement behavioral monitoring for every AI system operating near operational technology. Establish baselines. Alert on deviations. Do not wait for an incident to define what normal looks like.
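The simplest version of "establish baselines, alert on deviations" is a statistical one: record a metric during normal operation, then flag readings that fall far outside it. The sketch below uses a z-score against the mean and standard deviation. The metric (setpoint changes per hour), the sample values, and the threshold of three standard deviations are assumptions; real monitoring would track many signals and likely use more robust methods.

```python
import statistics


def build_baseline(samples):
    """Summarize normal behavior as a mean and sample standard deviation."""
    return statistics.mean(samples), statistics.stdev(samples)


def is_deviation(value, baseline, z_threshold=3.0):
    """Flag a reading more than `z_threshold` standard deviations from the mean."""
    mean, stdev = baseline
    if stdev == 0:
        return value != mean  # no variation was observed, so any change stands out
    return abs(value - mean) / stdev > z_threshold


# Hypothetical metric: setpoint changes per hour issued by one AI controller.
normal_hours = [4, 5, 6, 5, 4, 6, 5, 5]
baseline = build_baseline(normal_hours)

print(is_deviation(6, baseline))   # within the normal range
print(is_deviation(40, baseline))  # far outside it
```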

Commission digital twin environments for AI systems that touch critical infrastructure. Test adversarial scenarios before they occur in production.

Audit your credential architecture specifically for AI agent access. Eliminate long-lived tokens with broad permissions. Jason Clinton recommended storing infrastructure configuration files in version control and ensuring vulnerability management is continuously tested, not reviewed annually.
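The credential audit described above can start as a simple policy check over an export of the tokens your AI agents hold. This sketch flags tokens that live too long or carry broad scopes. The one-hour limit, the scope names treated as broad, and the token records themselves are hypothetical and would come from your own policy and identity provider.

```python
from datetime import timedelta

MAX_LIFETIME = timedelta(hours=1)         # assumed policy, not from the panel
BROAD_SCOPES = {"*", "admin", "write:*"}  # assumed markers of over-broad access

# Hypothetical export of credentials held by AI agents.
TOKENS = [
    {"id": "tok-1", "agent": "scheduler", "lifetime": timedelta(minutes=15),
     "scopes": {"read:telemetry"}},
    {"id": "tok-2", "agent": "scheduler", "lifetime": timedelta(days=90),
     "scopes": {"read:telemetry"}},
    {"id": "tok-3", "agent": "optimizer", "lifetime": timedelta(days=365),
     "scopes": {"admin"}},
]


def audit(tokens):
    """Return {token_id: [findings]} for every token that violates the policy."""
    report = {}
    for token in tokens:
        findings = []
        if token["lifetime"] > MAX_LIFETIME:
            findings.append("long-lived")
        if token["scopes"] & BROAD_SCOPES:
            findings.append("broad-scope")
        if findings:
            report[token["id"]] = findings
    return report


print(audit(TOKENS))
```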

Clarify internal ownership. Understanding who owns AI risk within your organization is a prerequisite for closing the adoption asymmetry gap before an incident forces that conversation.

The Investment Imbalance That Is Creating the Exposure

Organizations are spending tens of millions on GPU clusters, model training infrastructure, and agentic AI deployment. Investment in AI governance, behavioral monitoring, and infrastructure-level security lags by an order of magnitude. That imbalance is the origin of the risk. Our thinking on AI risk management addresses exactly this budget calculus.

The Chain Is Already in Place

From GPU to grid, the AI-governed chain connecting compute to critical infrastructure already exists. The question is whether the governance and security architecture protecting it was built to the same standard. For most organizations, the answer is not yet. The ones who close that gap first will define what resilient AI in critical infrastructure security looks like for the decade ahead.

Ready to Secure Your AI Infrastructure?

If your organization is deploying AI across sensitive or regulated infrastructure and still relying on perimeter controls and token-based trust to govern it, you are operating on borrowed time. Samta.ai provides an AI governance and risk visibility platform purpose-built to give enterprises the behavioral observability, governance controls, and agentic AI oversight they need to deploy AI with confidence. Talk to our team today, before an incident makes the conversation urgent.


About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

Tatva: AI-driven data intelligence for governed analytics and insights

VEDA: Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI: Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

Samta.ai provides the strategic consulting and technical engineering needed to align your human capital with your AI goals, ensuring a frictionless

Frequently Asked Questions

What is AI in critical infrastructure security?

It refers to securing AI systems embedded in or connected to essential services such as energy grids, water systems, financial networks, and industrial control environments. It covers both protection from attack and governance of AI behavior within those systems.

How do you secure AI infrastructure?

Securing AI infrastructure requires behavioral monitoring of AI models, cryptographic identity verification for AI agents, digital twin testing environments, infrastructure-level observability extending to firmware and hardware, and human-in-the-loop governance for high-stakes decisions.

What are the biggest AI infrastructure risks?

AI-powered lateral movement attacks, agentic AI behavioral unpredictability, system brittleness from AI-generated code, adoption asymmetry between attackers and defenders, and vulnerabilities in legacy industrial control systems are the most critical risks organizations face today.

How can AI improve security in critical environments?

Through faster threat detection, automated behavioral analysis, and pattern recognition at scales humans cannot match. However, this only holds when AI systems themselves are governed by robust frameworks that ensure their behavior is auditable, verifiable, and continuously monitored.

What is AI-powered security for edge and IoT infrastructure?

It means deploying AI-driven monitoring and anomaly detection at the network edge and on IoT devices, while simultaneously securing the AI systems performing that monitoring. Given that edge and IoT devices often run legacy firmware with limited native security controls, this requires both AI capability and hardware-level security auditing.