Enterprises don't fail at AI ethics. They fail at operationalizing responsible AI. Every major organization deploying AI today has a principles document. Most have an ethics committee. A handful have a governance policy buried in a SharePoint folder that nobody reads. What almost none of them have is a functioning, evidence-backed, audit-ready system that proves their AI is actually doing what they claim. That gap between stated values and operational reality is where regulatory exposure lives, where trust erodes, and where the next AI incident is quietly waiting to happen.

TL;DR: 5 Unignorable Insights

Ethical AI is the "why." Responsible AI is the "how." Trustworthy AI is the evidence. Confusing these three is the root of most governance failures.

Audit readiness requires artifacts, not attitude. Risk registers, model cards, and impact assessments are non-negotiable, not nice-to-haves.

Accountability must be named, not assumed. One human being owns each AI decision. RACI charts make this explicit.

Governance embedded into CI/CD pipelines beats governance committees by every measurable metric. Static oversight kills velocity. Continuous governance scales it.

Start with Minimum Viable Governance. A maintained AI system inventory plus clear decision ownership gets you further than a 200-page policy framework.

The Three Terms Everyone Misuses

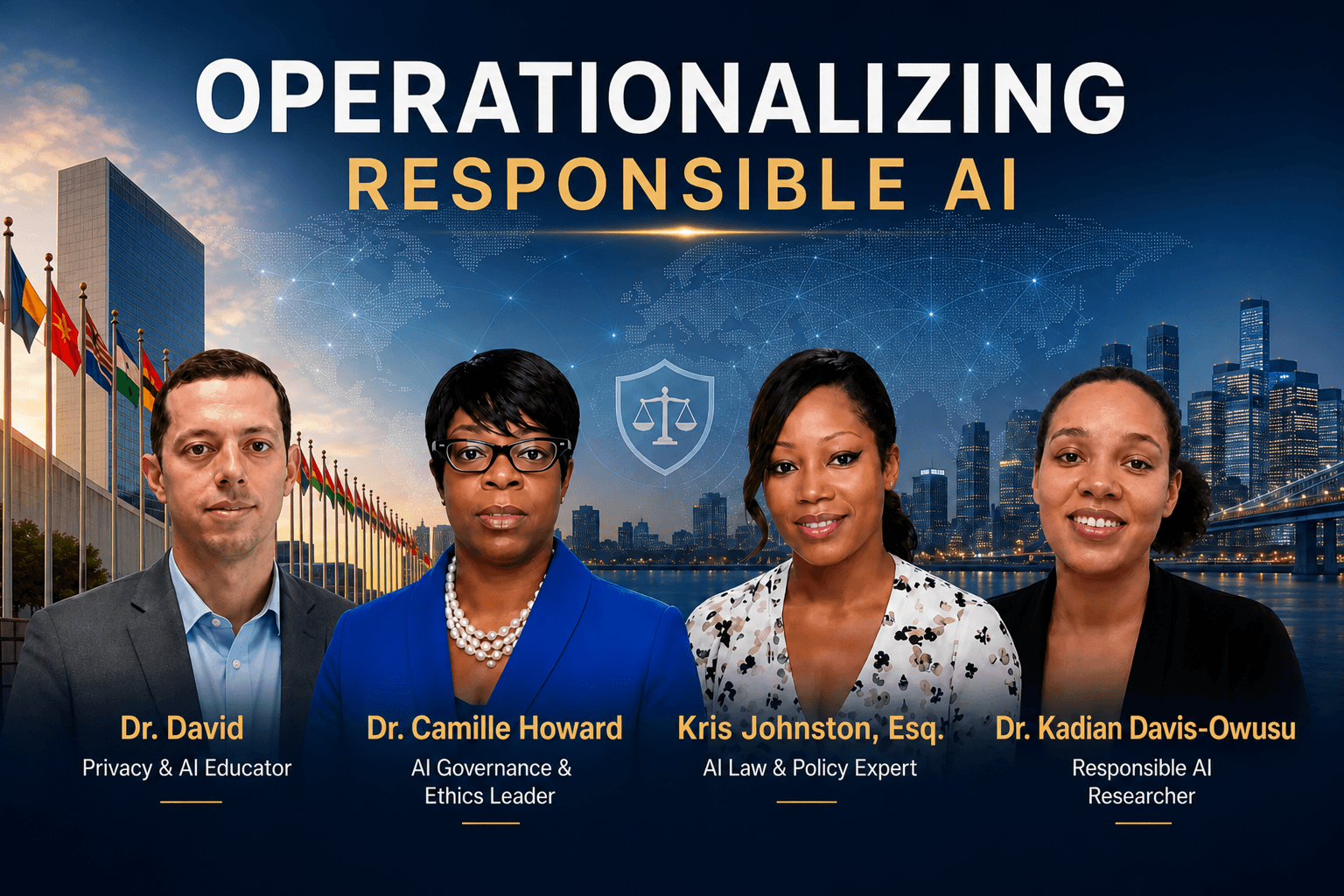

During the "Operationalizing Responsible AI" panel, the conversation opened with a deceptively simple question from host Dr. Kyle David: what exactly is the difference between Responsible AI, Ethical AI, and Trustworthy AI? The answers restructured the entire framing of enterprise AI governance strategy.

Dr. Camille Howard, an ethics and compliance leader specializing in governance-first approaches, defined Responsible AI through three operational pillars: AI impact assessments, technical documentation, and governance checkpoints. For her, responsibility is structural, not philosophical: it lives in process design, not mission statements.

Kris Johnston, Esq., Head of Privacy and AI Governance at Affinity, drew the sharpest line: Ethical AI is the "why," the values and principles an organization holds about AI. Responsible AI is the "how": the operational muscle, the controls, the processes that enforce those values in practice. And Trustworthy AI is the outcome: the evidence that the system is actually safe and reliable, verified externally, not asserted internally.

Dr. Kadian Davis-Owusu, an AI governance researcher and educator, anchored Responsible AI to governance structure and Ethical AI to the human-centered moral principles that guide design. Her framing is critical for understanding why the difference between ethical AI and responsible AI is not semantic; it is the difference between aspiration and execution.

Watch: Operationalizing Responsible AI

Featuring Dr. Kyle David (Host), Dr. Camille Howard, Kris Johnston Esq., and Dr. Kadian Davis-Owusu.

"Responsible AI is the operational muscle the controls and processes not just the values. Trustworthy AI is the outcome: the evidence that proves the system is safe." Kris Johnston, Esq., Head of Privacy and AI Governance, Affinity

The Problem Is Not Ethics. It Is Infrastructure.

Most enterprises don't have a shortage of AI ethics. They have a shortage of AI execution infrastructure. In most boardrooms, the conversation about a responsible AI implementation framework ends at policy. What it should produce is an operational system: documented, monitored, accountable, and audit-ready. This is the core shift the panelists collectively described: from governance as documentation to governance as living infrastructure.

Kris Johnston introduced a phrase during the panel that should appear on every CISO's wall: "Trust me bro AI." This is the category of AI deployment where governance exists on paper but not in evidence. An auditor asks for proof. The response is a shrug and a principles deck. No risk register. No model cards. No impact assessments. No logs of governance checkpoints passed. This is not responsible AI. It is compliance theater wearing an ethics costume.

What auditors actually need: risk registers that document identified AI risks and mitigations, model cards that describe a model's intended use, training data, known limitations, and evaluation results, AI impact assessments that evaluate potential harms before deployment, and governance checkpoint logs that prove review actually happened. Samta.ai's AI Security and Compliance Services are built specifically to produce and maintain this evidence layer at enterprise scale.

Dr. Kadian Davis-Owusu gave concrete industry examples that make this tangible: in hiring tools, bias testing must be documented and repeatable, a direct application of Samta.ai's Tatva AI Hiring Assessment Platform, which embeds fairness validation into every assessment cycle. In healthcare AI, clinical validation evidence must exist before any deployment decision. The AI risk management framework is not abstract; it produces artifacts an auditor can examine, a regulator can challenge, and a board can rely on.

Accountability: The Hardest Problem in AI Governance

Dr. Camille Howard made a point that reshapes how enterprises should think about AI accountability in organizations: the business owns the decision. Not the data science team. Not the vendor. Not the AI. A single, named human being, typically the product owner, is accountable for every consequential AI output in a production system. The moment that accountability becomes diffuse, it disappears entirely.

Kris Johnston, Esq. formalized this with two tools that every enterprise deploying AI should immediately adopt. First, the RACI model: mapping who is Responsible, Accountable, Consulted, and Informed for each AI system and each category of decision it makes. Second, the three lines of defense model, borrowed from financial risk management: the business unit operating the AI as the first line, risk and compliance functions as the second, and internal audit as the third. These are not new concepts. Applying them systematically to AI is new, and it is overdue.
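As a rough illustration of the RACI idea, the sketch below checks that each AI system has exactly one accountable owner. The `RaciEntry` structure, the system names, and the role names are all hypothetical and were not presented on the panel; treat it as one possible way to make "a single, named owner" machine-checkable.

```python
from dataclasses import dataclass, field


@dataclass
class RaciEntry:
    """RACI assignment for one AI system (all names here are hypothetical)."""
    system: str
    responsible: list[str]
    accountable: list[str]
    consulted: list[str] = field(default_factory=list)
    informed: list[str] = field(default_factory=list)


def raci_problems(entry: RaciEntry) -> list[str]:
    """Return human-readable problems with one RACI entry."""
    problems = []
    # Accountability must sit with exactly one named owner.
    if len(entry.accountable) != 1:
        problems.append(
            f"{entry.system}: expected exactly 1 accountable owner, "
            f"found {len(entry.accountable)}"
        )
    # Someone has to actually operate the system.
    if not entry.responsible:
        problems.append(f"{entry.system}: no responsible party listed")
    return problems


entries = [
    RaciEntry("resume-screener", ["ml-platform-team"], ["hiring-product-owner"]),
    RaciEntry("credit-scoring", ["risk-ds-team"], ["lending-product-owner", "vendor"]),
]
report = [p for e in entries for p in raci_problems(e)]
for line in report:
    print(line)
```

Here the second entry is flagged because accountability is split between two parties, which is the diffuse ownership the panel warned against.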

The accountability problem is also a culture problem. When an AI system makes a wrong call, organizations instinctively ask what went wrong with the model. The right question is who owned that decision, and what was the review process. Decision accountability, not abstract ethics, is what gives leadership teams something actionable to present to a board. Samta.ai's AI Governance and Risk Management services operationalize exactly this accountability architecture across enterprise AI portfolios.

The Responsible AI Execution Framework (RAEF)

Five operational pillars that convert AI principles into audit-ready enterprise infrastructure, based on insights from the "Operationalizing Responsible AI" panel.

Pillar 1: Accountability Mapping

Deploy RACI roles for every AI system. Name a single accountable owner, typically the product or business owner. Apply the three lines of defense model to create structural oversight without creating bottlenecks. Samta.ai's AI Governance Framework 2026 guide walks through exactly how to implement this across complex enterprise portfolios.

Pillar 2: Auditability Systems

Build the evidence layer: risk registers, model cards, AI impact assessments, and governance checkpoint logs. Every AI system in production must have a paper trail that survives regulatory scrutiny. Start with Samta.ai's AI Risk Assessment Templates and the full AI Risk Management Framework. The Veda AI Data Analytics Platform provides the real-time visibility layer that makes this evidence collection continuous rather than periodic.

Pillar 3: Responsible AI by Design

Weave governance controls directly into CI/CD pipelines. As Kris Johnston argued, governance that lives outside the development process will always be bypassed. Responsible AI by design means the guardrails are baked in, not bolted on after deployment. Samta.ai's Workflow Automation Consulting helps engineering teams embed compliance gates into existing development workflows without disrupting delivery velocity.
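To make "baked in, not bolted on" concrete, here is a minimal sketch of a governance gate that a pipeline step could run before a model is promoted. The manifest layout, the artifact keys, and the file paths are assumptions made for illustration; they are not a Samta.ai format or any CI vendor's API.

```python
# Evidence the panel said auditors ask for; the key names are hypothetical.
REQUIRED_ARTIFACTS = (
    "model_card",
    "risk_register",
    "impact_assessment",
    "checkpoint_log",
)


def missing_artifacts(release: dict) -> list[str]:
    """Return required artifacts that are absent or empty in a release manifest."""
    artifacts = release.get("artifacts", {})
    return [name for name in REQUIRED_ARTIFACTS if not artifacts.get(name)]


def gate(release: dict) -> int:
    """Return a process exit code: 0 lets the pipeline continue, 1 blocks it."""
    missing = missing_artifacts(release)
    for name in missing:
        print(f"BLOCKED {release['model']}: missing {name}")
    return 1 if missing else 0


# Hypothetical manifest for a release candidate with incomplete evidence.
release = {
    "model": "churn-v3",
    "artifacts": {
        "model_card": "docs/churn-v3/model_card.md",
        "risk_register": "docs/churn-v3/risks.csv",
    },
}
exit_code = gate(release)
```

In a real pipeline, a step would typically end with `sys.exit(gate(release))` so that a non-zero code fails the build; the point of the design is that the evidence check runs on every release rather than in a separate review meeting.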

Pillar 4: Continuous Monitoring

Static governance is dead on arrival. Dr. Kadian Davis-Owusu emphasized continuous model drift monitoring and performance tracking as non-negotiable for any responsible AI deployment. Models decay. Data distributions shift. Governance must track both in real time. Samta.ai's Veda AI Analytics Platform delivers continuous model performance monitoring with automated alerting when drift thresholds are crossed. Read more in Why Model Lifecycle Management Matters.
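One common way to quantify a shift in data distribution is the Population Stability Index (PSI). The sketch below is a plain-Python illustration, not a description of how any particular monitoring product works; the bin count, the synthetic data, and the 0.2 alert threshold (a widely quoted rule of thumb) are assumptions.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if the baseline has no spread.
    width = (hi - lo) / bins or 1.0

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff, tune per model

baseline = [i / 100 for i in range(100)]        # synthetic training-time scores
shifted = [0.5 + i / 200 for i in range(100)]   # synthetic recent scores, skewed high

drift_alert = psi(baseline, shifted) > ALERT_THRESHOLD
print("drift alert:", drift_alert)
```

A scheduled job could compute this per feature or per model score and write the result to the governance checkpoint log, so that the monitoring itself leaves audit evidence.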

Pillar 5: System Inventory and Visibility

You cannot govern what you cannot see. Maintaining a comprehensive AI system inventory (knowing what models are in production, what decisions they influence, and who owns them) is the foundation of any framework for operationalizing responsible AI. No inventory means no governance, regardless of how impressive the policy document looks. Samta.ai's Digital Transformation Managed Services include full AI system cataloguing and ongoing inventory management as core deliverables.

How to Actually Start: Minimum Viable Governance

All three panelists converged on the same practical advice when Dr. Kyle David asked where enterprises should begin: start with an MVP, a Minimum Viable Governance posture. Not a 300-page policy. Not a new committee. Not a multi-year transformation program. Two concrete actions have an immediate impact on how enterprises implement AI governance: build an AI system inventory so you know what is deployed, and assign a named accountable owner to each system so you know who is responsible for each decision.

Dr. Camille Howard's additional prescription was equally sharp: replace slow, static governance committees with fluid, continuous governance structures embedded in the teams that actually build and operate AI systems. The challenge of operationalizing responsible AI ethics is not a knowledge problem. Organizations know what good governance looks like. The failure is always in the execution infrastructure: the systems, roles, tools, and checkpoints that make governance operational rather than aspirational.
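A Minimum Viable Governance inventory can start as something very small. The sketch below assumes a hypothetical `AISystem` record with three fields and reports which systems still lack a named owner; the field names and example systems are illustrative only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    """One row of a hypothetical AI system inventory."""
    name: str
    decision_influenced: str
    accountable_owner: Optional[str] = None  # a named person or role


def governance_gaps(inventory: list[AISystem]) -> list[str]:
    """Names of systems that have no accountable owner recorded."""
    return [s.name for s in inventory if not s.accountable_owner]


def ownership_coverage(inventory: list[AISystem]) -> float:
    """Fraction of inventoried systems with an accountable owner."""
    if not inventory:
        return 0.0
    owned = sum(1 for s in inventory if s.accountable_owner)
    return owned / len(inventory)


inventory = [
    AISystem("support-chatbot", "customer replies", "head-of-support"),
    AISystem("fraud-model", "transaction holds"),
    AISystem("resume-screener", "candidate shortlisting", "hiring-product-owner"),
    AISystem("pricing-model", "quote generation"),
]

print("unowned systems:", governance_gaps(inventory))
print("ownership coverage:", ownership_coverage(inventory))
```

Even a list this simple answers the two questions the panel started with: what is deployed, and who answers for it.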

Enterprises ready to move from principles to execution can benchmark their current posture using Samta.ai's AI Readiness Assessment, review the Agentic AI Governance Framework for next-generation deployment governance, and access the EU AI Act Readiness Guide to understand incoming regulatory requirements that will make governance infrastructure mandatory, not optional.

"Governance that lives outside the development process will always be bypassed. Responsible AI by design means the guardrails are baked in." Kris Johnston, Esq.

The Investment Gap That Defines Enterprise AI Risk

There is a structural imbalance in how enterprises allocate AI investment. Organizations are spending millions on model development, data infrastructure, and AI product teams. They are spending almost nothing on governance systems, monitoring infrastructure, and audit readiness tooling. This is the equivalent of building a high-performance aircraft and treating the maintenance program as a discretionary budget line.

The enterprise AI governance strategy conversation must move from governance as a cost center to governance as a risk management investment, one that protects the value of every AI deployment made. Regulatory pressure is accelerating this calculus. The EU AI Act, evolving SEC guidance on algorithmic accountability, and sector-specific requirements in financial services and healthcare are converting governance from best practice to legal obligation.

Enterprises that treat AI compliance as optional are accumulating regulatory liability at the same rate they are deploying AI systems. These two curves will intersect. The organizations without audit-ready governance infrastructure will bear the full cost of that collision. The path forward is not to slow down AI adoption; it is to build the operational backbone that allows AI to scale responsibly, where every deployment has evidence, every decision has an owner, and every model has a monitor. That is not an ethics commitment. It is an operational imperative.

The Execution Gap Is the Only Gap That Matters

The "Operationalizing Responsible AI" panel delivered one verdict that every CISO, CTO, and AI leader needs to hear before their next board meeting: your organization does not have an ethics problem. It has an execution problem. Dr. Camille Howard, Kris Johnston, Esq., and Dr. Kadian Davis-Owusu all pointed to the same truth: principles without operational infrastructure are not governance, they are decoration. The enterprises that will lead the next phase of AI adoption are not the ones with the most sophisticated models. They are the ones who can prove, with evidence, that their AI systems are trustworthy, accountable, and continuously monitored. Operationalizing responsible AI is not the barrier to innovation. It is the only foundation on which sustainable AI innovation can be built. The time to build that foundation is not after the audit. It is now.

Most enterprises discover their AI governance gaps when a regulator asks a question they cannot answer or an AI system fails in a way nobody can explain. By then, the cost of remediation is ten times the cost of prevention. Samta.ai's AI governance platform, AI Security and Compliance Services, Veda AI Analytics Platform, and Tatva Hiring Assessment Platform give enterprises the audit-ready infrastructure, real-time monitoring, and accountability architecture they need to deploy AI with confidence, not just intent.

Request a Free Product Demo with Samta.ai and see exactly how your current AI governance posture holds up against a real regulatory audit scenario, with a concrete remediation roadmap delivered at no cost.

About Samta

Samta.ai is an AI Product Engineering & Governance partner for enterprises building production-grade AI in regulated environments.

We help organizations move beyond PoCs by engineering explainable, audit-ready, and compliance-by-design AI systems from data to deployment.

Our enterprise AI products power real-world decision systems:

TATVA: AI-driven data intelligence for governed analytics and insights

VEDA: Explainable, audit-ready AI decisioning built for regulated use cases

Property Management AI: Predictive intelligence for real-estate pricing and portfolio decisions

Trusted across FinTech, BFSI, and enterprise AI, Samta.ai embeds AI governance, data privacy, and automated-decision compliance directly into the AI lifecycle, so teams scale AI without regulatory friction.

Enterprises using Samta.ai automate 65%+ of repetitive data and decision workflows while retaining full transparency and control.

Samta.ai provides the strategic consulting and technical engineering needed to align your human capital with your AI goals, ensuring a frictionless.

Frequently Asked Questions

What is operationalizing responsible AI?

Operationalizing responsible AI means converting AI ethics principles into functioning operational systems: accountability structures, audit evidence, continuous monitoring, and governance controls embedded in development pipelines. As Kris Johnston defined it on the panel, it is the "how" that transforms the "why" of ethical AI into the demonstrated outcomes of trustworthy AI.

How do enterprises implement AI governance in practice?

The panelists recommend starting with Minimum Viable Governance: build an AI system inventory, assign named accountable owners using a RACI model, create model cards and risk registers for each production system, and integrate governance checkpoints into CI/CD pipelines. Samta.ai's AI Governance Framework 2026 provides a step-by-step implementation guide.

What is the RACI model in AI accountability?

RACI maps four roles for each AI system: who is Responsible for operating it, who is Accountable for the decisions it makes, who is Consulted during governance reviews, and who is Informed of outcomes. Combined with the three lines of defense model, it creates a complete AI accountability architecture that eliminates diffuse ownership.

What does AI audit readiness actually require?

Audit readiness means having evidence, not just assertions: documented risk registers, model cards, AI impact assessments, and governance checkpoint logs. Samta.ai's AI Risk Assessment Templates and AI Security and Compliance Services provide the tooling to build and maintain this evidence layer continuously.

What is Responsible AI by Design?

Responsible AI by design means embedding governance controls directly into CI/CD pipelines and development workflows rather than applying them as external reviews after development is complete. Kris Johnston described it as governance that is baked in, not bolted on, ensuring every model that reaches production has passed documented, verifiable governance checkpoints without slowing the development team down.